This post was written by one of the stars in our developer community, Thiago Santana.

File-sharing is one of the most elementary ways to perform system integration. In the context of web applications, we call “upload” the process in which a user sends data/files from a local computer to a remote computer.

Sometimes we need to expose, in our REST API, an upload operation that allows the transmission of:

- Binary files (of any kind)

- The meta information related to it (e.g., name, content type, size and so on…)

- Perhaps some additional information for performing business logic processing

But we would like all this information to arrive on the server in the same request. This sounds like a challenge, doesn’t it?

This guide will demonstrate one strategy, among many, to implement this integration scenario using resources provided by Mule components.

SOAP Legacy – Identifying Reusable Concepts

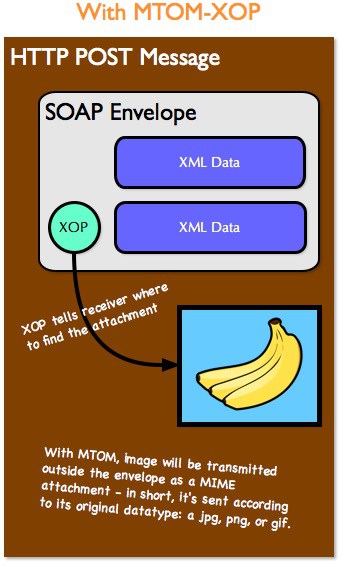

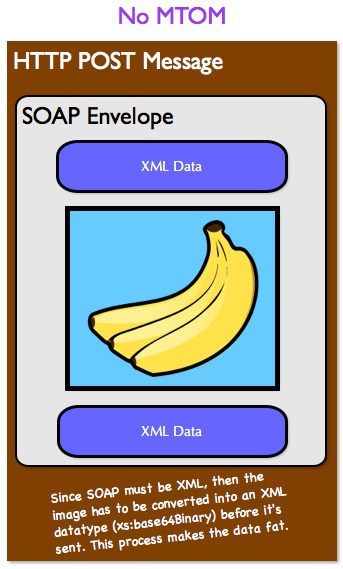

Just to contextualize, this strategy was inspired by the architecture of a legacy project that I needed to maintain. The project needed to transmit binary files in SOAP Web Services, without using MTOM.

When we do not use MTOM with SOAP, and the files are transmitted as a MIME attachment of the payload (similar to the process of sending emails containing attachments [see fig.1]), the implementation of the protocol converts the contents of the file that needs to be transmitted, and the result of this conversion is also a binary string in the Base64 format.

After the conversion, the String is deposited inside an XML tag, which is part of the Body content of the payload that is being transmitted to the remote server [see fig2].

There is an advantage to using this strategy, which is the ability to transmit this tag in the same payload that may contain other information, such as the data needed to process business rules, or better still contain the meta information of the file.

Since all the necessary information is in the same payload, the process of reading and/or parsing the message is simplified. All of this is in a single request handled by the service, which is very important for the processing economy and helps avoid maintaining and/or managing transactions handled by the service, in other words, it conforms to the Stateless behavior.

This is very desirable because it conforms to one of the great features of the HTTP protocol (which was designed to be Stateless) and allows the transaction to be completely processed in one request. As a result, it will not be necessary to request additional information for the service consumer; in addition, we can still do part of the treatment process internally, asynchronously, without prolonging the client response time or timeout.

Finally, this strategy also provides server resource savings since there is no need to request additional information regarding the transaction in progress.

I thought that some of the advantages identified in the architecture applied in the legacy solution context, and so I built a way to port this architecture to a REST API, applying also significant improvements.

Implementation – Porting the Solution to REST API

When defining any type of API, we should consider that, when making use of any interface, consumers usually expect to find availability, simplicity, and stability. Defining a RAML contract is one of the ways to establish guidelines that favor the construction of a REST API which offers simplicity and stability from the start.

Similar to WSDLs, when we define and make available a RAML, we give our consumers a better chance to prepare for it, as well as identify difficulties and provide suggestions for improvements for future versions of the contract. Using ready-made infrastructures, such as the Mulesoft’s Anypoint API Designer, the consumer can also test this RAML by generating an endpoint with mock information (provided in the RAML itself), testing the consuming part of the interface. You are able to do all of this before you make a minimal implementation available to run on some server.

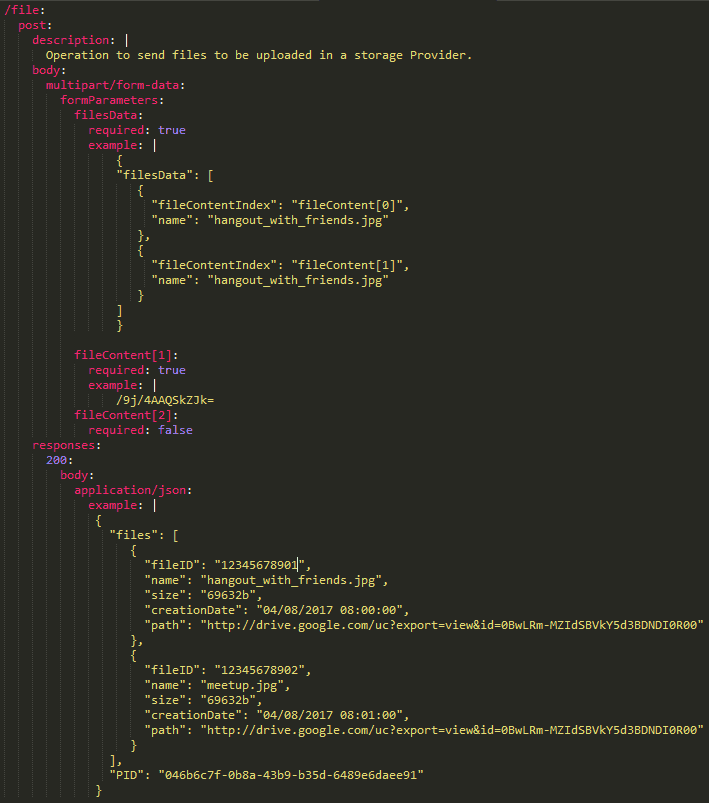

To implement the above-proposed scenario, we define the RAML contract containing the POST operation for the resource “file,” something like:

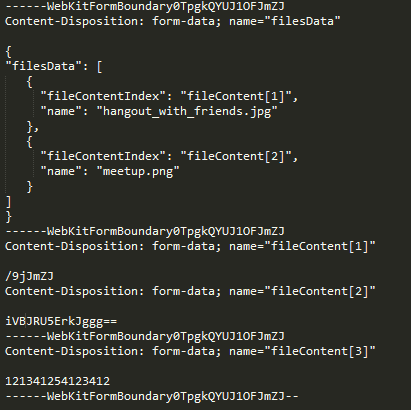

For a resource like this, we can have the following HTTP request example:

Below, I explain the flows that make up the Mule project of the REST API, which is running as Mule 3.8.1 EE runtime:

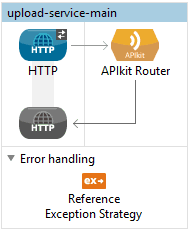

Main Flow:

It consists of an HTTP inbound endpoint configured to handle requests on port 8081, an APIKit Router, and a Reference Exception Strategy.

Post Flow:

The APIKit Router sends HTTP Post requests to this stream. It consists of two triggers (Flow References) for the buildFileData stream and filesSplitter, DataWeave which prepares the response that is returned to the consumer, and a Logger to register on the console that response.

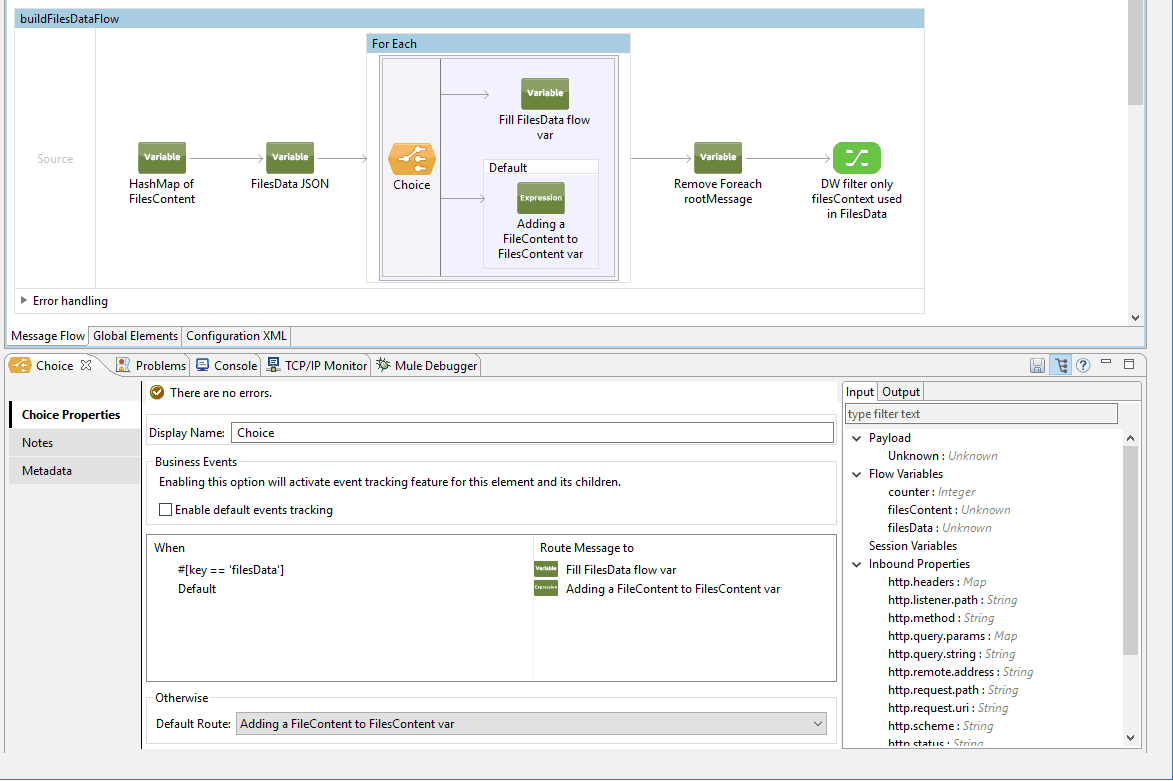

Build FilesData Flow:

Declaration of the FilesContent variables (a Java HashMap) and FilesData (a simple String var). A ForEach traverses the inboundAttachment of the current message (a multipart-formData) to separate the FileContent [] from the filesData. There is also a component to remove the rootMessage variable generated during the interaction of the ForEach.

Lastly, DataWeave performs the merge of the original FilesData Payload with the FilesContent data. This is done here to simplify for the consumer the activity of passing binary data within a JSON. DataWeave also disregards any past FileContent [] that is not declared as used in the “fileContentIndex” field in the received FilesData JSON. This helps filter information and avoids the use of invalid data.

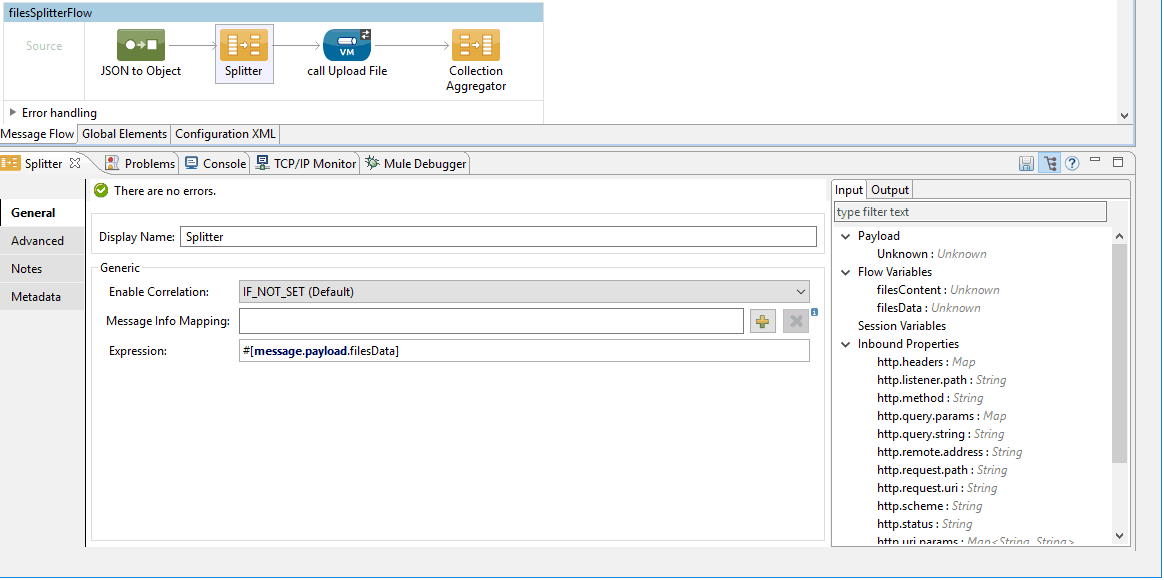

Files Splitter Flow:

This converts the received JSON FilesData to a built-in Mule Java object. This object is partitioned, each part represents a file that will be sent to the outbound VM component (where each file is processed separately). The Collection Aggregator component is responsible for gathering the enriched payloads in the same structure.

Upload File Flow:

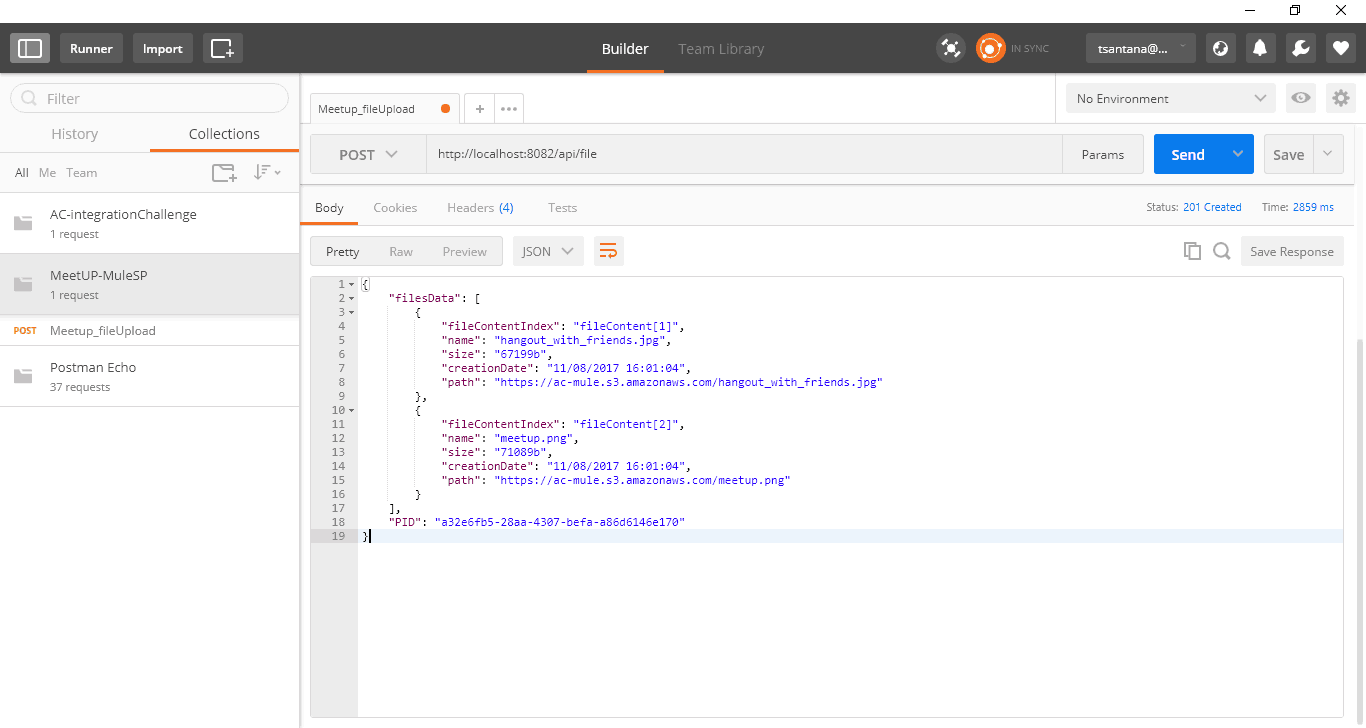

As suggested by the MuleSoft documentation, we can use an inbound VM to handle requests that originate from a Message Splitter. Within this Flow, each file is pre-processed and then sent to Amazon S3 by the Create Object operation. The pre-process consists of converting the Base64 binary String from the “fileContent” field of the payload into a BiteArray. The result of this conversion, which, in this case, is performed by the base64-decoder component, is stored in a variable.

This variable satisfies the Create Object component, along with the file and bucket names. Because this operation doesn’t return a usable response, we have to add a call to Get Object component that allows us to retrieve data from the file that was stored in the storage.

We can complete this step with the help of DataWeave–– the data received at the beginning of this flow is enriched with the FileSize and HttpURI data that was received in the response of the S3 Get Object operation.

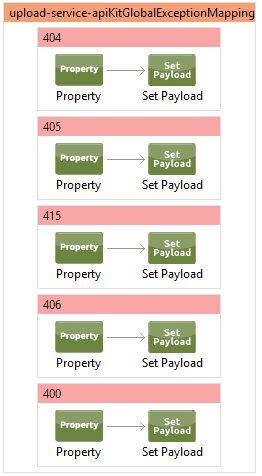

Exception Mapping Flow:

Mule automatically generates this flow when we create a project, providing some RAML file to the APIKit. It offers some exception treatments handled automatically by APIKit.

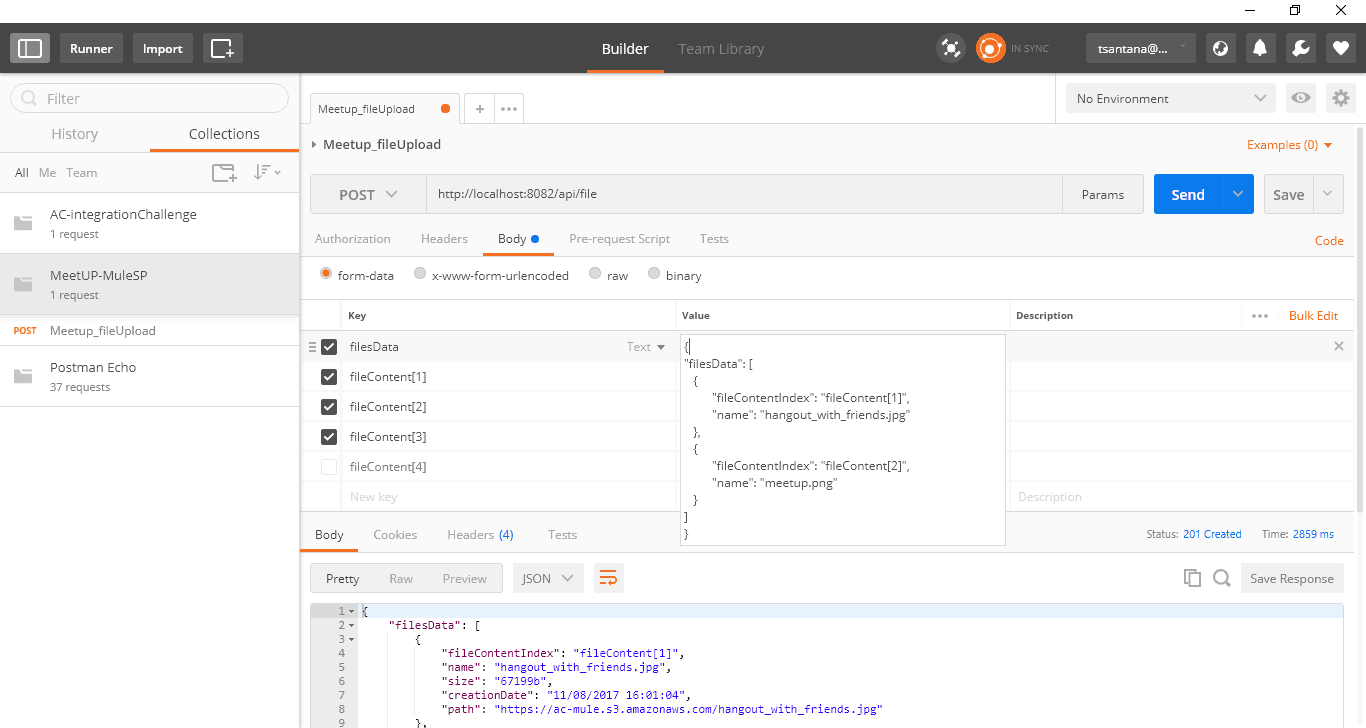

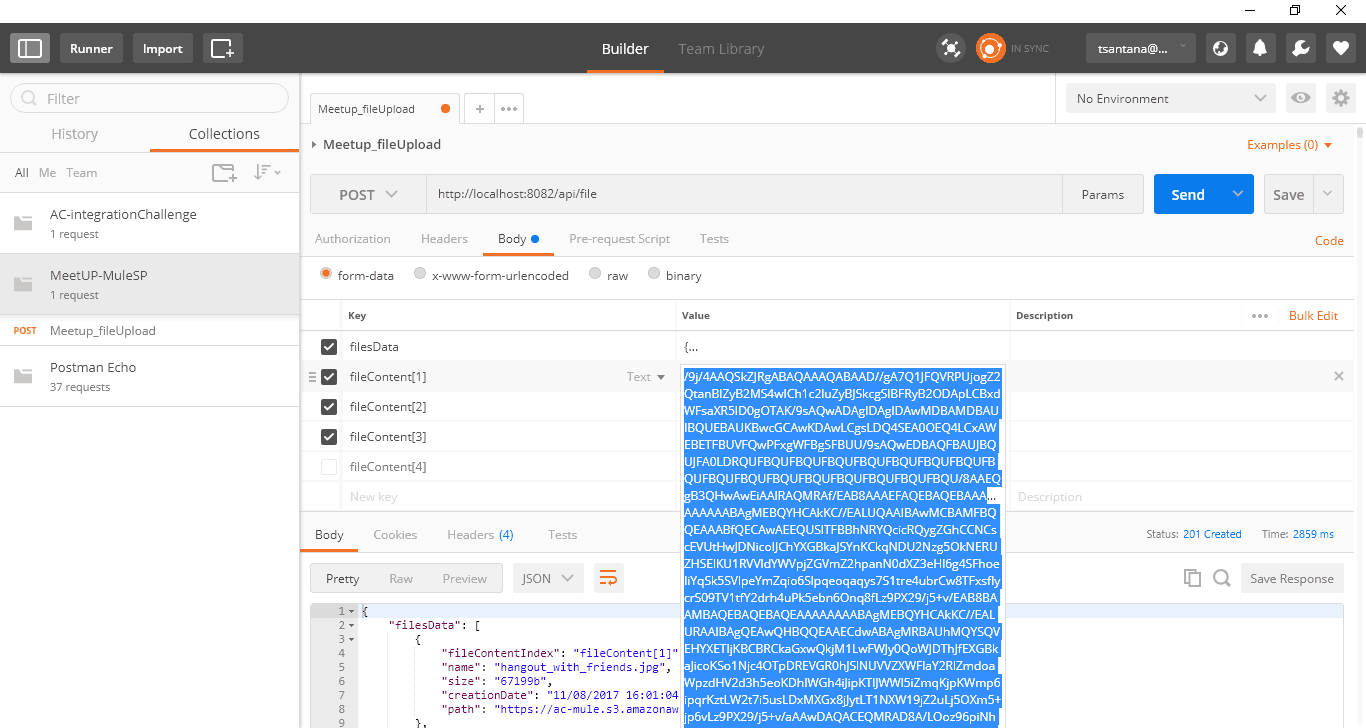

Testing the Solution

Most simple REST API tests, especially those involving GET operations, can easily be done by command line utilities; for example, cURL. Stress tests can be generated with the help of the JMeter tool. Tests can also be performed on newer versions of SoapUI.

Please note that I opted to perform the tests with the help of the Postman tool (an extension for the Google Chrome browser).

You can verify that we can submit more than one fileContent[] as form param, but only the indexes reported as being used in the JSON passed in the form param “filesData” will be processed by API––all others will be ignored. If no exception happens, we receive a JSON containing the processed data and HTTP status 201.

I hope you enjoyed this guide, for relevant content that was cited please refer to the resources below:

References:

- MuleSoft Docs: Splitter Flow Control Reference

- How Does MTOM Work?

- RAML

- Anypoint Connector: Amazon S3

- jMeter Tutorial

- HTTP Scripting

- Getting Started with REST Testing

This blog post first appeared on Dzone.