Microservice Strategy: Not All Services Are Created Equally

At MuleSoft, we regularly have discussions with organizations about the role of our integration and API platform in the context of a microservice architecture.

5 reasons why ESB-led integration is out and API-led integration is in

Integration has been an enterprise challenge for a long time. In order to create the new products, applications, and services that businesses need to

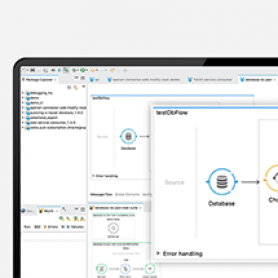

Testing with MuleSoft’s MUnit: Part 2

This tutorial continues from Part 1. Mocking is a feature MUnit provides to simulate the behavior of a message processor. Basically, MUnit replaces

Using advanced file filters in File Inbound endpoint

Mule ESB allows you to connect to anything, anywhere, using a wide range of connectors and endpoints. The File connector is one of the

Microservices versus ESB

This post is by one of our MuleSoft champions, Antonio Abad. Let’s start with a simple definition of both concepts: “An ESB is basically

Weaving it with Dataweave expression

We all know how powerful the DataWeave Transform Message component is. It is a template engine that allows us to transform data to

Enterprise Service Bus vs Traditional SOA

The principles of SOA were sound; it was the implementation that failed. Service-oriented principles should be the underpinning philosophy behind integration, and an enterprise

The top 6 facts about Mule ESB

An Enterprise Service Bus (ESB) is a set of principles and rules for connecting applications together over a bus-like infrastructure. ESB products enable users

Send emails using hMail server with Mule as an ESB

What is hMailServer? hMailServer is a free, open-source e-mail server for Microsoft Windows. It supports the common e-mail protocols like IMAP, SMTP and

API-led connectivity and CQRS: How Mule supports traditional integration tasks

There is a lot of interest in how Mule supports emerging patterns like CQRS (Command Query Responsibility Segregation), so I wanted to create a