In my previous blog post, I explained the different scenarios required to get a VPC in place. But there’s another question we need to answer before creating our first VPC: is one VPC enough, or how many VPCs do we really need? In this blog post, we’ll review some of the considerations that determine how many VPCs your network architecture should include.

Backend location

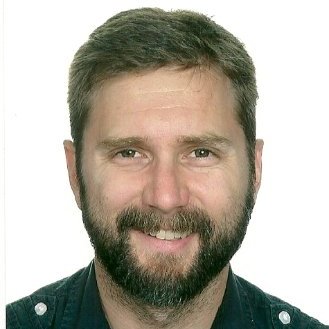

If we are going to connect our VPC(s) to our data center to give our Mule apps access to our back-end systems, the first question to ask is where the backend is. The Mule VPC will be created in an AWS region, so create it in the region closest to your backend to minimize latency.

However, many organizations don’t have a unique location for their data center. Sometimes data centers are geographically distributed in different on-prem locations or in different regions of a cloud provider. If that’s the case, you have two options:

- Create one VPC with a connection to each data center location. In this case, the latency will differ for each location, so we’ll need to assess whether the furthest location still gets an acceptable latency.

- Create one VPC per region, each close to a data center location, and set up a connection in each VPC to that location. In this case, the latency would be optimal for every location. The caveat here is that apps in different regions will be isolated from each other and not reachable within the local network.
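To make the choice between those two options more concrete, here is a minimal sketch in Python. It assumes you have measured round-trip latencies from each candidate region to each data center yourself. The region names, data center names, latency figures, and the `regions_within_budget` helper are all hypothetical and only there for illustration.

```python
from typing import Dict, List

# Hypothetical measurements: round-trip latency in ms from each candidate
# AWS region to each data center location. Replace with your own numbers.
SAMPLE_LATENCY_MS: Dict[str, Dict[str, float]] = {
    "eu-central-1": {"frankfurt-dc": 5.0, "dublin-dc": 28.0},
    "eu-west-1": {"frankfurt-dc": 27.0, "dublin-dc": 4.0},
    "us-east-1": {"frankfurt-dc": 95.0, "dublin-dc": 80.0},
}


def regions_within_budget(
    latency_ms: Dict[str, Dict[str, float]], budget_ms: float
) -> List[str]:
    """Return the regions whose worst-case latency to any data center
    stays within the budget, ordered from best to worst."""
    # The furthest data center decides whether a single VPC is acceptable.
    worst = {region: max(per_dc.values()) for region, per_dc in latency_ms.items()}
    acceptable = [region for region, value in worst.items() if value <= budget_ms]
    return sorted(acceptable, key=lambda region: worst[region])


if __name__ == "__main__":
    print(regions_within_budget(SAMPLE_LATENCY_MS, budget_ms=30.0))
    print(regions_within_budget(SAMPLE_LATENCY_MS, budget_ms=20.0))
```

If the function returns at least one region, a single VPC in that region is probably worth considering. An empty list suggests that no single region serves every data center well enough, which points towards one VPC per region.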

Isolation and traffic segregation

There is another consideration for the number of VPCs needed: the different groups of apps/APIs and the level of isolation required for each group. At this point, we need to talk about business groups and environments.

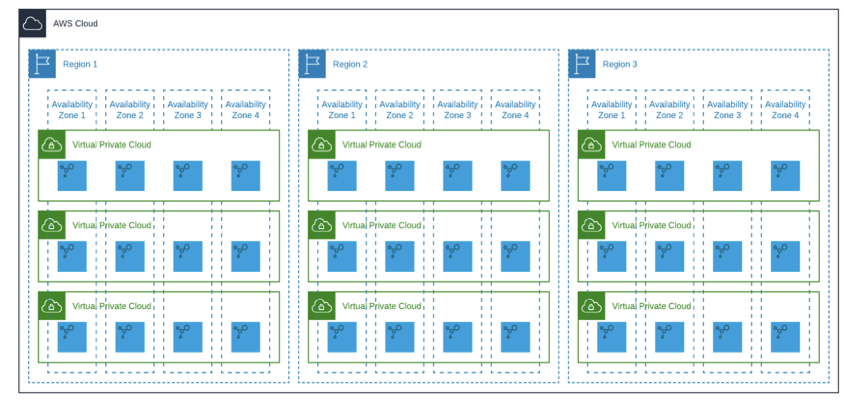

Business groups are a great way to separate and control access to Anypoint resources. A VPC is a resource that can be created at the master org level and also at a business group level. But be careful: a VPC can only be shared vertically down, not up or across.

If you don’t have any particular requirement for network isolation between the BGs of your org, create your VPC at the master org level to avoid running into that sharing limitation. However, there are organizations (usually big companies made up of business units) where a higher level of separation is required and a logical division into BGs is not enough. In those cases, you’ll need as many VPCs as there are business units that require this traffic segregation.
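The "down, not up or across" rule can be sketched as a walk up the business group tree. This is only an illustrative model, not an Anypoint API: the group names, the `PARENT` mapping, and the `can_share` function are made up for the example.

```python
from typing import Dict, Optional

# Hypothetical org structure: each business group points to its parent.
# The master org is the root and has no parent.
PARENT: Dict[str, Optional[str]] = {
    "master-org": None,
    "retail": "master-org",
    "finance": "master-org",
    "retail-emea": "retail",
}


def can_share(owner: str, target: str, parent: Dict[str, Optional[str]] = PARENT) -> bool:
    """Return True when a VPC owned by `owner` could be shared with `target`,
    i.e. when `target` is the owner itself or sits somewhere below it."""
    node: Optional[str] = target
    while node is not None:
        if node == owner:
            return True
        node = parent[node]  # climb one level towards the master org
    return False


if __name__ == "__main__":
    print(can_share("master-org", "retail-emea"))  # down the tree
    print(can_share("retail", "finance"))          # across
    print(can_share("retail-emea", "retail"))      # up the tree
```

Under this model, a VPC created at the master org can reach every business group, while one created in a business group can only reach that group and its children.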

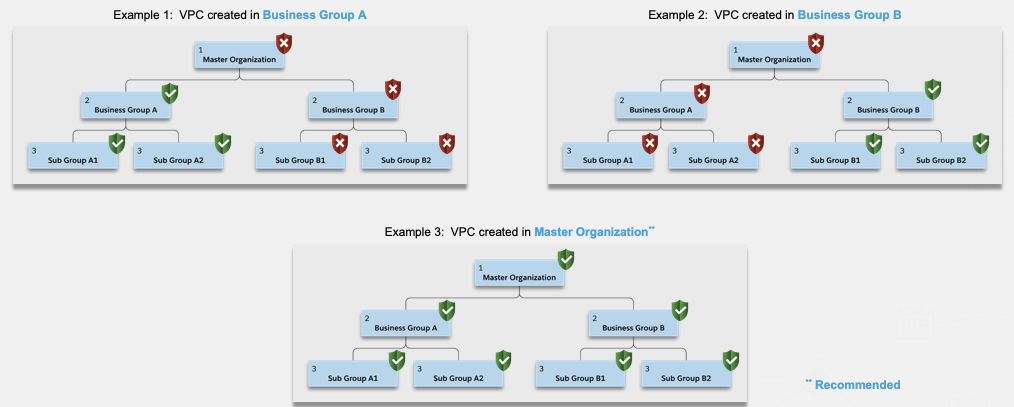

Each environment is associated with one VPC and only one. What’s key to understand here is that we can have multiple environments in the same VPC, but one environment cannot be part of two VPCs.

This way, every app gets deployed to an environment and that environment is associated with a VPC. There is no setting to link an app directly to a VPC; the app is hosted in the VPC associated with the environment you’re deploying to.

This provides a mechanism of isolation for our apps. Two environments associated with the same VPC will be logically separated, but they will belong to the same network segment. On the other hand, two environments associated with different VPCs will be completely isolated from each other: they are two different network segments, so the traffic between them is segregated.

The recommendation in this regard is to have a minimum of two VPCs: one for production environments and one for non-production environments (dev, QA, stage, test), to segregate traffic between production and non-production. This guarantees that non-production apps cannot access production data, and vice versa. Some organizations enforce a greater level of security and require segregation between all the environments. In that case, we’ll need a VPC per environment.
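As a rough sketch of the two-VPC recommendation, the environment-to-VPC association can be modelled as a plain mapping. The environment and VPC names below are hypothetical, and `network_isolated` is just an illustrative helper, not something Anypoint Platform provides.

```python
from typing import Dict

# Hypothetical association of environments to VPCs. A dict key can only
# hold one value, which mirrors the rule that an environment belongs to
# exactly one VPC, while several environments may share the same VPC.
ENV_TO_VPC: Dict[str, str] = {
    "dev": "vpc-nonprod",
    "qa": "vpc-nonprod",
    "stage": "vpc-nonprod",
    "prod": "vpc-prod",
}


def network_isolated(env_a: str, env_b: str, env_to_vpc: Dict[str, str] = ENV_TO_VPC) -> bool:
    """Return True when the two environments live in different VPCs,
    i.e. in different network segments."""
    return env_to_vpc[env_a] != env_to_vpc[env_b]


if __name__ == "__main__":
    print(network_isolated("dev", "prod"))  # different VPCs
    print(network_isolated("dev", "qa"))    # same VPC, only logically separated
```

Moving to a VPC per environment would simply mean giving every key in the mapping its own VPC name, at which point every pair of environments is isolated.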

Anypoint Platform connectivity considerations

If you remember from my previous post, Anypoint Platform offers three connectivity methods to your data center:

- IPSec VPN

- VPC peering to your AWS VPC

- Connection to your AWS Direct Connect location

As we’ve seen earlier in this post, in some cases our data center is geographically distributed or has different locations. In those instances where more than one connection in the same VPC is required, we need to pay attention to the following:

VPNs and Direct Connect

We can’t have a VPN and a Direct Connect connection in the same VPC. So plan your network architecture ahead if you require both a site-to-site VPN connection and a connection to your Direct Connect location: the two connections cannot live within the same VPC, so you’ll need two VPCs.

Regions in your AWS account

Maybe you’re planning to connect your Mule VPC to the VPCs in your AWS account. Whether you’re thinking of VPC peering or Direct Connect, both types of connection need to be established within the same region as your Mule VPC. You can’t have one Mule VPC peered with two AWS VPCs in different regions. For example, if you have an AWS VPC in Frankfurt and another one in Dublin, you’ll need two Mule VPCs, one in each of those regions, to do the peering. The same applies to Direct Connect: your Direct Connect location needs to be in the same region as your Mule VPC.
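The connectivity rules from the last two sections can be captured in a small planning check. The sketch below is a hypothetical model written for this post, not part of any Anypoint tooling: the `Connection` and `MuleVpc` classes, the connection kind strings, and the `violations` function are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Connection:
    kind: str         # "vpn", "peering", or "direct-connect" (labels chosen for this sketch)
    region: str = ""  # region of the AWS VPC or Direct Connect location; unused for VPN


@dataclass
class MuleVpc:
    name: str
    region: str
    connections: List[Connection]


def violations(vpc: MuleVpc) -> List[str]:
    """Check a planned VPC against the rules described in this post and
    return a list of human-readable problems (empty when the plan is fine)."""
    problems: List[str] = []
    kinds = {conn.kind for conn in vpc.connections}
    # Rule 1: a VPN and a Direct Connect connection cannot share a VPC.
    if {"vpn", "direct-connect"} <= kinds:
        problems.append(f"{vpc.name}: VPN and Direct Connect in the same VPC")
    # Rule 2: peering and Direct Connect must be in the Mule VPC's region.
    for conn in vpc.connections:
        if conn.kind in ("peering", "direct-connect") and conn.region != vpc.region:
            problems.append(
                f"{vpc.name}: {conn.kind} in {conn.region}, but the VPC is in {vpc.region}"
            )
    return problems


if __name__ == "__main__":
    plan = MuleVpc(
        name="mule-vpc-frankfurt",
        region="eu-central-1",
        connections=[
            Connection("peering", "eu-central-1"),
            Connection("peering", "eu-west-1"),  # Dublin: needs its own Mule VPC
        ],
    )
    for problem in violations(plan):
        print(problem)
```

Running a check like this over every planned VPC gives a quick count of how many VPCs the connectivity requirements alone will force you to create.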

Subnets

There are no subnets in Mule VPCs. Behind the scenes, Anypoint VPCs are AWS VPCs, but that does not mean they offer the same features as AWS. For example, it is not possible to establish VPC peering between two Mule VPCs. Likewise, you can’t define two network segments within the same Mule VPC as you would in AWS, because the concept of a subnet simply does not exist in Anypoint VPCs. If you were planning to have two different network segments for isolation purposes, you’ll need two VPCs.

Isolation between API-led connectivity layers

You might be wondering: if I can’t define subnets, how can I keep the traffic between Process and System APIs internal while the access to Experience APIs is public? That’s still possible using only one VPC, and for that you’ll need one or two dedicated load balancers. Have a look at this article in our Help Center to learn more about it.