In the past few years, we’ve seen dramatic developments in AI and AI-adjacent technologies, creating more possibilities than ever before. Yet there still seems to be a gap between the need and demand for artificial intelligence and the rate at which it’s being implemented within businesses.

Around half of organizations see benefits from using AI to automate IT, business or network processes, including cost savings and efficiencies (54%), improvements in IT or network performance (53%), and better experiences for customers (48%).

AI is complicated. Most AI projects never make it out of the experimental phase because AI requires more than traditional IT development. AI development in a business setting requires its own process, yet businesses developing AI often try to mimic other forms of development or fail to consider the development process much at all.

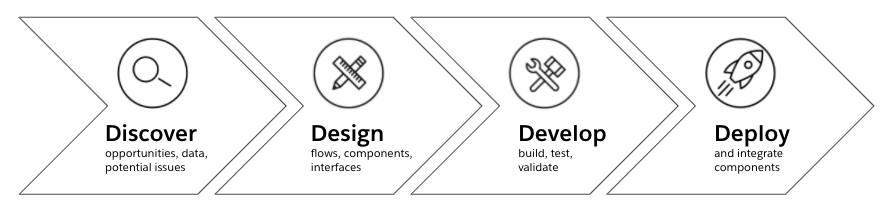

What are the 4 Ds of AI development?

We will address the development process and how some of the principles from my previous posts tie into the development of intelligent systems. This post will lay out the four Ds of AI development: discovery, design, development, and deployment.

1. Discovery

Teams often start by trying to prototype AI models against their data. More so than other forms of technical development, we need to start any AI process with an in-depth discovery process that involves multiple parts of the business. This will ensure that teams avoid building something until there’s a better understanding of whether or not it will solve the problem at hand.

Discovery is an intensive process, but won’t result in much wasted time if an idea doesn’t pan out. As you get further into the four Ds, things become more tailored to the individual solution you’re working on.

Identify the problem

Discovery begins by identifying a problem we need to solve. Discovery can start with two practical questions: “How can we improve our customer experience?” and “How can we improve our employee experience?” These questions are broad by design with an eye towards turning up several potential opportunities for development in general.

Automated intelligence examples could include a product recommendation engine for a retail company, or a text chatbot AI for a business with an online support channel. The possibilities are nearly endless, and can cover all sorts of problems you may be facing. Discovery is best thought of as a funnel, and these two questions form a very broad catch-all.

Qualify ideas

We next need to qualify these opportunities to assess if they are good fits for AI. This qualification is equal parts technical and ethical: “Can we use AI for this opportunity?” and “Should we use AI for this opportunity?” Technical possibility will likely require engaging with a subject matter expert who can look at both the problem and the possible solutions that exist.

You’ll probably find that most, if not all, of the problems you’ve uncovered are technically solved already in some form. If the problem hasn’t been solved, that doesn’t mean it can’t be; it just means that you’ll be investing time and money into research.

The ethical filter can be a little more complex but is just as vital. Running a consequence scanning workshop is one way to filter out potentially dangerous ideas. Failing to consider the implications of your output has resulted in all sorts of nasty accidents with AI models that don’t need to be rehashed here. Suffice it to say, be extremely thorough in considering possible ethical implications and filter your opportunities carefully through an ethical lens.

Analyze data

By this point in discovery, you’ve narrowed down your list of problems to things that are valuable to your business, technically possible, and ethically positive. The final piece of discovery is an in-depth analysis of your data to make sure you have the right data to address the problem.

Unlike the earlier filters, this one doesn’t necessarily remove opportunities; it prevents you from initiating development on something you won’t be able to finish. Where the other filters provide a hard “no” to development, looking at your data provides a “wait” response. You can always collect or buy data to approach your problem. The question is whether or not you want to go through that process.
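The discovery funnel described above can be sketched in a few lines of Python. This is only an illustration: the `Opportunity` fields and the `qualify` function are hypothetical names, and in practice each flag would be the outcome of an expert review or a workshop rather than a simple boolean.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    GO = "go"
    WAIT = "wait"  # data is missing, but could be collected or bought
    NO = "no"      # fails a hard filter


@dataclass
class Opportunity:
    # Hypothetical stand-ins for the outcome of each discovery review.
    name: str
    technically_feasible: bool
    ethically_sound: bool
    has_required_data: bool


def qualify(opp: Opportunity) -> Verdict:
    """Apply the hard filters first, then the softer data check."""
    if not (opp.technically_feasible and opp.ethically_sound):
        return Verdict.NO
    if not opp.has_required_data:
        return Verdict.WAIT
    return Verdict.GO


ideas = [
    Opportunity("product recommendations", True, True, True),
    Opportunity("support chatbot", True, True, False),
    Opportunity("risky idea", True, False, True),
]
results = {opp.name: qualify(opp) for opp in ideas}
for name, verdict in results.items():
    print(f"{name}: {verdict.value}")
```

Keeping “wait” separate from “no” mirrors the distinction in the text: a data gap defers an opportunity instead of discarding it.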

2. Design

Now that we’ve discovered problems that we want to solve, can solve, and should solve, we start building, right? Well, not so fast. Jumping straight into building can result in functional models, but not models that are integrated into the business. Solving the problem is only half the battle; making that solution usable requires design work.

Establish API-led architecture

Designing these systems is more about figuring out how this model will integrate than it is about the actual model itself. On the architecture side, what will you need to integrate AI into your stack? What systems does it need to access? What systems need to access it?

One of the big challenges AI practitioners face is integrating their finished models into the wider business application network. By deploying API-enabled models, you can treat these models as Lego bricks from which you can build any number of larger solutions within the business. At a high level, this requires you to define interfaces between systems and build smaller AI applications that you can compose together.
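To make the building-block idea concrete, here is a minimal sketch in which two small “models” share one interface (a dictionary in, a dictionary out) and are composed into a larger solution. The function names and the keyword rule are invented for illustration; real models would sit behind network APIs, not local functions.

```python
from functools import reduce
from typing import Any, Callable, Dict

Payload = Dict[str, Any]
Step = Callable[[Payload], Payload]


def detect_sentiment(payload: Payload) -> Payload:
    """Placeholder for a sentiment model: a keyword rule, not real inference."""
    negative = "refund" in payload["text"].lower()
    return {**payload, "sentiment": "negative" if negative else "neutral"}


def route_ticket(payload: Payload) -> Payload:
    """Placeholder for a routing model that consumes the sentiment output."""
    queue = "escalations" if payload["sentiment"] == "negative" else "general"
    return {**payload, "queue": queue}


def compose(*steps: Step) -> Step:
    """Chain steps that share the same interface into one larger step."""
    def run(payload: Payload) -> Payload:
        return reduce(lambda acc, step: step(acc), steps, payload)
    return run


triage = compose(detect_sentiment, route_ticket)
result = triage({"text": "I want a refund"})
print(result)
```

Because every step accepts and returns the same shape, either brick can be reused elsewhere or replaced without touching the other.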

Define interfaces between models

Defining interfaces between these models provides us with significant benefits in the development stages. Figure out what data you want to pass to your model, and what data your model will send out before building your model.

Just like in traditional development, these interfaces may change as development progresses, but at least attempting to define those interfaces up front will allow you to start evangelizing these models within the business and externally. Feedback is critical, and getting to that feedback as quickly as possible is even more important.
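One lightweight way to pin down an interface before any model exists is to write the request and response as typed records. The sketch below assumes a recommendation engine like the one mentioned earlier; every field name is hypothetical and would be replaced by whatever your teams agree on.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass(frozen=True)
class RecommendationRequest:
    # What callers will send to the model (hypothetical fields).
    customer_id: str
    max_items: int = 5

    def __post_init__(self) -> None:
        if not self.customer_id:
            raise ValueError("customer_id must not be empty")
        if self.max_items < 1:
            raise ValueError("max_items must be at least 1")


@dataclass(frozen=True)
class RecommendationResponse:
    # What the model promises to send back (hypothetical fields).
    customer_id: str
    product_ids: List[str]
    model_version: str


request = RecommendationRequest(customer_id="c42", max_items=2)
response = RecommendationResponse("c42", ["sku-1", "sku-2"], "draft-0")
print(json.dumps(asdict(request)))
print(json.dumps(asdict(response)))
```

Even a draft like this gives other teams something specific to react to, which is where the early feedback comes from.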

3. Development

Development for different model types will progress differently. Generally, statistical models will progress faster and require less engineering effort, while models that fall further into the AI space like neural networks or deep learning will develop slowly and require significant engineering effort to deploy.

Interface specifications

Publishing interface specifications into the organization will enable parallel development from engineering teams. One of the biggest roadblocks to AI adoption is integrating the models these teams produce into the business. Publishing these API specifications prior to deployment allows for development to progress simultaneously elsewhere in the IT organization, thus reducing your time to market with these types of solutions.
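As a rough sketch of what “publishing” could look like, the snippet below turns typed request and response records into a small JSON document that other teams can read. The record names, the endpoint path, and the `describe` helper are all assumptions made for the example; a real organization would more likely publish a standard format such as an OpenAPI document.

```python
import json
from dataclasses import dataclass, fields


@dataclass
class ChurnRequest:
    customer_id: str
    months_active: int


@dataclass
class ChurnResponse:
    customer_id: str
    churn_probability: float


def describe(cls) -> dict:
    """Map each field name of a dataclass to the name of its type."""
    return {f.name: getattr(f.type, "__name__", str(f.type)) for f in fields(cls)}


spec = {
    "endpoint": "/churn/predict",  # hypothetical path
    "request": describe(ChurnRequest),
    "response": describe(ChurnResponse),
}
print(json.dumps(spec, indent=2))
```

With a document like this in hand, a consuming team can start building against the agreed shapes while the model itself is still in development.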

Test the model

Another important aspect of the AI development process is specific to this space. Once your AI team has assembled a model that they think works, you should be cautious about deploying it into production. You may exercise the same caution for regular software projects, but there you can generally catch and fix bugs quickly.

AI models may not display bugs until much further down the line. Publish the model into a sandbox and watch it carefully over a longer period of time to make sure the results are rational. Statistical models won’t need nearly as much oversight as models that learn (such as neural networks), but we still recommend it as a precautionary measure. This allows you to produce multiple candidate models with the defined interface and test them all at once before deploying your best one. Oversight should continue after deployment to production, but you shouldn’t be blindsided by results it produces.
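Comparing several candidates behind one interface might look like the sketch below. The threshold “models”, the four labeled samples, and the accuracy metric are toy assumptions chosen to keep the example short; a real sandbox would replay far more traffic over a much longer period.

```python
from typing import Callable, Dict, List, Tuple

# A candidate is any callable that maps a feature value to a predicted label.
Candidate = Callable[[float], int]


def threshold_model(cutoff: float) -> Candidate:
    """Build a toy classifier: predict 1 when the input reaches the cutoff."""
    return lambda x: 1 if x >= cutoff else 0


def accuracy(model: Candidate, samples: List[Tuple[float, int]]) -> float:
    """Fraction of samples for which the model predicts the recorded label."""
    correct = sum(1 for x, label in samples if model(x) == label)
    return correct / len(samples)


# Made-up (feature, label) pairs standing in for observed sandbox traffic.
sandbox_samples = [(0.1, 0), (0.4, 0), (0.6, 1), (0.9, 1)]

candidates: Dict[str, Candidate] = {
    "cutoff_0.3": threshold_model(0.3),
    "cutoff_0.5": threshold_model(0.5),
    "cutoff_0.8": threshold_model(0.8),
}

scores = {name: accuracy(model, sandbox_samples) for name, model in candidates.items()}
best = max(scores, key=scores.get)
print(scores, best)
```

Since every candidate has the same call signature, adding another one to the comparison is a one-line change.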

4. Deployment

Our last and possibly most overlooked step is deployment. If you’ve followed along with the process this far, the model you’ve developed should be in pretty good shape. Ideally, you’ve designed interfaces for your model to communicate with. This model has been tested and observed for bugs over a longer period of time and you’re certain it’ll solve a business problem. Development externally has also progressed, and now all you need to do is plug the model in.

Leverage API-based abstraction

This is where the value of APIs becomes directly apparent. No matter how you choose to deploy your model, APIs abstract interaction with other systems away from the model itself. They allow you to protect your model from garbage in, and to set strict expectations for what the model should put out.
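A minimal sketch of that protective layer follows. The `raw_model` function is a hypothetical stand-in for a trained model, and the `age` field and its bounds are invented for the example; the point is only that validation lives in the API layer on both sides of the model call.

```python
def raw_model(age: float) -> float:
    """Hypothetical stand-in for a trained model; returns a score."""
    return age / 120.0


def predict_api(payload: dict) -> dict:
    """Validate the input, call the model, then validate the output."""
    age = payload.get("age")
    is_number = isinstance(age, (int, float)) and not isinstance(age, bool)
    if not is_number or not 0 <= age <= 120:
        # Garbage in is rejected before it ever reaches the model.
        raise ValueError("age must be a number between 0 and 120")

    score = raw_model(float(age))

    if not 0.0 <= score <= 1.0:
        # The API refuses to pass along output that breaks its contract.
        raise RuntimeError("model produced a score outside [0, 1]")
    return {"score": score}


print(predict_api({"age": 30}))
```

Callers see either a well-formed response or a clear error, regardless of what the model does internally.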

APIs also allow for integration testing that doesn’t require the actual execution of the model. Most importantly, APIs allow you to swap out your model with a different implementation down the road should you discover that your model isn’t meeting expectations.
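Both ideas, testing without running the model and swapping implementations later, can be sketched with a shared interface and a stub. All class and function names here are hypothetical, and the “popularity” implementation is just a placeholder for whatever real model ends up behind the interface.

```python
from typing import List, Protocol


class Recommender(Protocol):
    def recommend(self, customer_id: str) -> List[str]: ...


class StubRecommender:
    """Returns canned results so callers can be tested without running a model."""

    def recommend(self, customer_id: str) -> List[str]:
        return ["sku-1", "sku-2"]


class PopularityRecommender:
    """Hypothetical alternative implementation behind the same interface."""

    def __init__(self, ranked_skus: List[str]) -> None:
        self._ranked = ranked_skus

    def recommend(self, customer_id: str) -> List[str]:
        return self._ranked[:2]


def render_homepage(customer_id: str, recommender: Recommender) -> str:
    """Calling code depends only on the interface, not on a specific model."""
    items = ", ".join(recommender.recommend(customer_id))
    return f"Recommended for {customer_id}: {items}"


# Integration test of the caller, with no model execution involved.
print(render_homepage("c42", StubRecommender()))
# Swapping in another implementation requires no change to the caller.
print(render_homepage("c42", PopularityRecommender(["sku-9", "sku-7", "sku-3"])))
```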

Regardless of how you choose to deploy your model, using APIs for communication between your model and other systems makes things simpler for your development teams and will reduce your time to market with these types of solutions.

Repeat

Once you’ve released a model, congratulations! You get to restart this process! Make sure you treat these models as building blocks. A model you use in one place within your business may have uses elsewhere. Composing these models with non-AI systems can also augment your data in ways you may not have considered initially, providing additional value. Best of luck on your artificial intelligence journey.