In my previous blog post, I wrote about Kerberos support in MuleSoft, so it seems only natural to discuss the other place where Kerberos fits into the API ecosystem. Not only can Kerberos be used to secure APIs hosted or proxied by MuleSoft, but certain third-party systems can use, or even require, Kerberos for their security.

The extensive library of connectors available on the Anypoint Exchange supports a variety of authentication protocols, as required by the third-party systems. Those that provide out-of-the-box Kerberos support are listed below.

CloudHub

Before we get to the connectors, it is important to note that if you wish to use them on CloudHub, certain prerequisites must be met:

- The DNS must be configured correctly on the CloudHub VPC, as Kerberos uses fully qualified hostnames to ensure hosts are identified correctly.

- The following properties must be added to the configuration of the CloudHub workers, as the workers are not in the Windows domain (or Kerberos realm):

- java.security.krb5.kdc=<kdc server or windows domain server>

- java.security.krb5.realm=<kerberos realm or windows domain>
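One way to catch a missing entry early is to check both properties when the application starts. The sketch below is a hypothetical helper (the class and method names are illustrative and not part of Mule or CloudHub). It assumes only that the two property names listed above are exposed to the JVM as system properties.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

// Hypothetical helper, not part of Mule: reports which of the two
// Kerberos system properties are absent or blank.
final class Krb5PropertyCheck {
    static final String KDC = "java.security.krb5.kdc";
    static final String REALM = "java.security.krb5.realm";

    static List<String> missing(Properties props) {
        List<String> absent = new ArrayList<>();
        for (String name : new String[] {KDC, REALM}) {
            String value = props.getProperty(name);
            if (value == null || value.trim().isEmpty()) {
                absent.add(name);
            }
        }
        return absent;
    }

    public static void main(String[] args) {
        // Inspect the properties of the running JVM.
        List<String> absent = missing(System.getProperties());
        if (absent.isEmpty()) {
            System.out.println("Kerberos KDC and realm are both set");
        } else {
            System.out.println("Missing Kerberos properties: " + absent);
        }
    }
}
```

The JDK expects the KDC and realm properties to be set together, so a check like this flags the case where only one of them made it into the worker configuration.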

Microsoft connectors

Microsoft SQL Database

OK, this one is a bit of a cheat: MuleSoft provides Kerberos support for MS SQL via the MS SQL JDBC Driver, version 6.2 or greater. In MuleSoft, we can use the “Generic Database Connector” configuration and enter the JDBC URL in the following format:

jdbc:sqlserver://<SERVERNAME>;database=<DBNAME>;authenticationScheme=JavaKerberos;integratedSecurity=true;userName=<USER>;password=<PASS>

With Kerberos, we need to use a fully qualified hostname for <SERVERNAME> so that the Service Principal Names (SPNs) match those registered on the Active Directory (AD) server. Finally, replace <DBNAME> with the database name, and <USER> and <PASS> with the account credentials.
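As an illustration, the URL format shown above can be assembled in code with a basic guard against short hostnames. This is a minimal sketch: the class name, method name, and sample values are hypothetical, and the "contains a dot" test is only a crude heuristic for a fully qualified name, not a real DNS check.

```java
// Hypothetical helper: assembles the JDBC URL in the format shown above.
final class SqlServerKerberosUrl {
    static String build(String host, String database, String user, String password) {
        // A bare name such as "sql01" has no domain part, so the SPN is
        // unlikely to match; reject it early. (Heuristic only.)
        if (host == null || !host.contains(".")) {
            throw new IllegalArgumentException(
                    "Use a fully qualified hostname, got: " + host);
        }
        // Note: values containing ';' are not escaped in this sketch.
        return "jdbc:sqlserver://" + host
                + ";database=" + database
                + ";authenticationScheme=JavaKerberos"
                + ";integratedSecurity=true"
                + ";userName=" + user
                + ";password=" + password;
    }

    public static void main(String[] args) {
        // Sample values are placeholders, not real hosts or credentials.
        System.out.println(build("sql01.corp.example.com", "Sales", "svc_mule", "changeit"));
    }
}
```

In a Mule application, the resulting string would go into the URL field of the Generic Database Connector configuration, ideally with the credentials supplied from secure properties rather than hard-coded.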

Microsoft Dynamics CRM

Support for Kerberos is available in the Microsoft Dynamics CRM connector, as described in the connector guide.

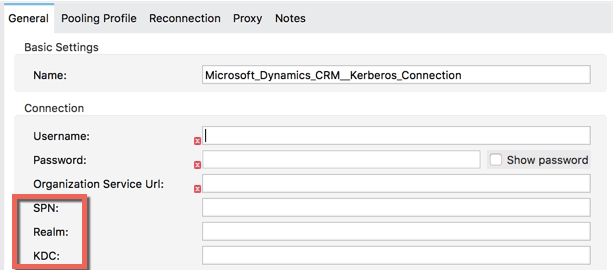

The Dynamics CRM connector always requires a username, password, and organization service URL, regardless of the authentication mechanism. If you choose the Kerberos option, however, some additional parameters are required: SPN, Realm, and KDC.

As per the connector guide, these values can be auto-discovered if the server hosting the Mule runtime is on the domain. However, if you are running the application in CloudHub, you will need to enter the values manually, as described in the guide.

Non-Microsoft connectors

Issues commonly come up for customers who want to connect to Apache Kafka or Hadoop using Kerberos-based authentication.

Kafka

The connector documentation walks through the setup of Kerberos for Kafka, including the properties files required to configure the connector. This can be found in bullet 12 of the connector guide.

However, additional parameters are required to run the connector on a CloudHub worker. For Kafka, these parameters are supplied in the form of a JAAS conf file.

This file needs to be pulled into the Mule project with an entry in the Mule application XML, as follows:

<jaas:security-manager><jaas:security-provider name="jaasSecurityProvider" loginContextName="KafkaClient" loginConfig="jaas.conf" /></jaas:security-manager>

The jaas.conf file can then be placed in the src/main/resources folder and populated with the following entries, replacing the <APPNAME> and <REALM> values with ones appropriate for your environment:

KafkaClient {
    com.sun.security.auth.module.Krb5LoginModule required
    useKeyTab=true
    keyTab="/opt/mule/mule-CURRENT/apps/<APPNAME>/classes/<keytab file>"
    storeKey=true
    useTicketCache=false
    serviceName="kafka"
    principal="kafka/<REALM>";
};
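Before deploying, it can help to confirm that the file parses and contains the `KafkaClient` entry. The sketch below uses the JDK's standard `JavaLoginConfig` configuration type to read a JAAS file and return the options of its first login module. The class name, method name, and default path are hypothetical, and the check only parses the file: it does not contact a KDC or verify that the keytab exists.

```java
import java.nio.file.Path;
import java.nio.file.Paths;
import java.security.NoSuchAlgorithmException;
import java.security.URIParameter;
import java.util.Map;
import javax.security.auth.login.AppConfigurationEntry;
import javax.security.auth.login.Configuration;

// Hypothetical helper: parses a JAAS file and returns the options of the
// first login module listed under the given context name.
final class JaasConfCheck {
    static Map<String, ?> options(Path jaasFile, String contextName) {
        try {
            Configuration config = Configuration.getInstance(
                    "JavaLoginConfig", new URIParameter(jaasFile.toUri()));
            AppConfigurationEntry[] entries =
                    config.getAppConfigurationEntry(contextName);
            if (entries == null || entries.length == 0) {
                throw new IllegalStateException("No entry named " + contextName);
            }
            return entries[0].getOptions();
        } catch (NoSuchAlgorithmException e) {
            // Raised when the file cannot be loaded or parsed.
            throw new IllegalStateException("Could not read " + jaasFile, e);
        }
    }

    public static void main(String[] args) {
        // Default path assumes the project layout described above.
        Path file = Paths.get(args.length > 0 ? args[0] : "src/main/resources/jaas.conf");
        System.out.println(options(file, "KafkaClient"));
    }
}
```

Running this locally against the project's jaas.conf is a quick way to spot a missing semicolon or a misspelled context name before the application reaches a CloudHub worker.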

Hadoop

The only option when running Hadoop in “secure mode” is to use Kerberos-based authentication. Stay tuned, because we will cover this topic in the next article!