Asynchronous messaging enables applications to decouple from one another to improve performance, scalability, and reliability. This post will review the most common messaging patterns, along with why and when to use them.

We’ll cover the following patterns:

- Publish/subscribe – publishers publish messages and a copy of each message is sent to any number of subscribers.

- Message queue – many consumers can read from the queue, but any message goes to (at most) one consumer.

- Many-to-one – only one consumer gets all messages.

- Request/reply – after a consumer processes a message, it sends a reply to another channel so the producer/publisher can find the results.

Different message brokers use different terms for these patterns, so I’ll try to clear up that confusion too. I won’t go into the details of how the brokers work under the hood in this post, as that varies depending on the technology you are using.

Publish/subscribe

aka: many-to-many, one-to-many, fan-out, broadcast.

In the publish/subscribe pattern, an application (the publisher) publishes messages and any number of other services (the subscribers) receive them. Applications that are interested in a publisher’s messages “subscribe” to a predefined channel that they know publishers will be sending messages to.

Use this pattern when multiple things need to happen for each event. For instance, when a user performs an action (submits a form), and you need to update several databases, send notifications, update other APIs, etc.

This pattern also allows you to add new functionality without interrupting or changing existing code. New applications can simply subscribe to the channel to receive the messages and perform new functions.

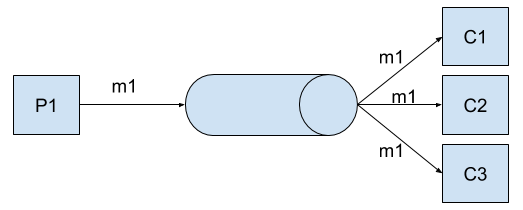

Publisher P1 sends message m1 to a channel. All subscribers (C1, C2, C3) will receive m1.
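
To make the fan-out concrete, here is a minimal in-memory sketch of the idea. It is not how any particular broker works; the `Broker` class, the `orders` channel name, and the handler functions are all made up for illustration.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List


class Broker:
    """Toy in-memory broker: every subscriber on a channel gets each message."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Callable[[str], None]]] = defaultdict(list)

    def subscribe(self, channel: str, handler: Callable[[str], None]) -> None:
        self._subscribers[channel].append(handler)

    def publish(self, channel: str, message: str) -> int:
        """Deliver the message to every subscriber; return how many received it."""
        handlers = self._subscribers[channel]
        for handler in handlers:
            handler(message)
        return len(handlers)


# Three hypothetical subscribers (C1, C2, C3) that just record what they receive.
received: Dict[str, List[str]] = {"C1": [], "C2": [], "C3": []}
broker = Broker()
for name in received:
    # name=name binds the current subscriber name into each handler.
    broker.subscribe("orders", lambda msg, name=name: received[name].append(msg))

# Publisher P1 sends m1 once; all three subscribers get it.
delivered = broker.publish("orders", "m1")
print(delivered, received)
```

Note that the publisher never references C1, C2, or C3 directly. Adding a fourth subscriber is one more `subscribe` call and no change to the publishing code.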

Example use cases:

Example 1: a user makes a purchase in an online store.

- Order event is published and user sees a message that says their order is submitted for processing.

- Inventory system also gets the event message and puts the item on hold while waiting for the charge to go through to prevent someone else from buying it.

- Payment processing attempts to charge the user’s credit card.

- Email system sends email to user saying “Your order is processing.”

- Analytics system updates the store’s analytics.

Example 2: an IoT device, such as a thermometer, collects measurements.

- Thermometer publishes a temperature measurement every few seconds.

- Reporting system subscribes to the temperature events and on each event, it updates a few metrics in the reporting/dashboard database.

- A thermostat service adjusts the target temperature based on the new data.

- An alerting service aggregates the temperature readings in a time window, and if the temperature stays too high for too long, an alert event is published.

Message broker support

This is a very common pattern which most modern message brokers support in some fashion.

- RabbitMQ: Publish/Subscribe

- ActiveMQ: Broadcast

- Kafka: Topics (topics are always multi-subscriber)

- NATS: Publish-subscribe

- AWS SQS/SNS: Pub/sub via SNS

- Azure Service Bus: Topics and Subscriptions

- Google Pub/Sub: Publisher-Subscriber

- Anypoint MQ: Message Exchange

Message queues

aka: Work Queues, Message Groups, Consumer Groups, Queue Groups, Competing Consumers, Point-to-Point Channels.

The message queue pattern is very common and is, essentially, what message queues were built for. Producers push messages onto a queue and consumers take those messages off the queue. Only one consumer gets a particular message, no matter how many consumers are asking for messages from that queue.

Message queues are great for distributing processing across multiple machines. Typically these “worker” machines have only one job: processing messages off queues. This has many benefits, such as taking load off front-end servers, enabling massive horizontal scaling, and elegantly handling load spikes. If there aren’t enough machines to process all the messages, the messages remain in the queue until a consumer can process them. To scale out, simply add more worker machines.
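
As a rough sketch of competing consumers, the snippet below uses Python’s standard `queue` and `threading` modules as a stand-in for a real broker and worker machines. The worker names and job names are invented for the example.

```python
import queue
import threading

jobs = queue.Queue()     # stands in for the broker's queue
processed = []           # (worker name, job) pairs, for inspection afterwards
lock = threading.Lock()  # protects the shared `processed` list


def worker(name: str) -> None:
    """Pull jobs off the queue until a None sentinel says to stop."""
    while True:
        job = jobs.get()
        if job is None:
            jobs.task_done()
            return
        with lock:
            processed.append((name, job))
        jobs.task_done()


# Three hypothetical workers compete for messages on the same queue.
workers = [threading.Thread(target=worker, args=(f"C{i}",)) for i in (1, 2, 3)]
for w in workers:
    w.start()

for n in range(10):
    jobs.put(f"job-{n}")
for _ in workers:
    jobs.put(None)       # one shutdown sentinel per worker

jobs.join()              # wait until every queued item has been handled
for w in workers:
    w.join()

print(sorted(job for _, job in processed))
```

Which worker handles which job varies from run to run, but each job is taken by exactly one worker. Scaling out in this toy version means starting more threads; with a real broker it means starting more worker machines.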

Another important difference between this and pub/sub is that the consumer of a message from a queue must acknowledge (ACK) that processing of that message is complete. If the consumer does not ACK the message within some timeout, the message queue service assumes processing failed and requeues the message so another consumer can process it. Because of this, a message may be processed (or partially processed) more than once if a worker server fails during processing, so it is important to ensure that your processing is idempotent.
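
Here is a small sketch of why idempotency matters, assuming a toy queue that keeps a message “in flight” until it is ACKed. `SimpleQueue`, `requeue_unacked`, and the message fields `id` and `amount` are hypothetical names for this example; real brokers expose this behavior through their own APIs and timeouts.

```python
from collections import deque


class SimpleQueue:
    """Toy queue: a message stays in flight until it is acked."""

    def __init__(self, messages):
        self._ready = deque(messages)
        self._in_flight = {}

    def receive(self):
        if not self._ready:
            return None
        msg = self._ready.popleft()
        self._in_flight[msg["id"]] = msg
        return msg

    def ack(self, msg_id):
        self._in_flight.pop(msg_id, None)

    def requeue_unacked(self):
        # Stands in for the broker's ACK timeout expiring.
        self._ready.extend(self._in_flight.values())
        self._in_flight.clear()


balance = {"total": 0}
applied_ids = set()  # in practice this would live in durable storage


def handle(msg):
    """Idempotent handler: applying the same message twice has no extra effect."""
    if msg["id"] in applied_ids:
        return
    balance["total"] += msg["amount"]
    applied_ids.add(msg["id"])


q = SimpleQueue([{"id": "a", "amount": 5}, {"id": "b", "amount": 7}])

first = q.receive()
handle(first)          # the work is done...
# ...but the worker "crashes" here, before calling q.ack(first["id"]).
q.requeue_unacked()    # the broker gives up waiting and redelivers "a"

while (msg := q.receive()) is not None:
    handle(msg)
    q.ack(msg["id"])

print(balance["total"])
```

Message `a` is delivered twice, but because the handler checks `applied_ids` first, the total ends at 12 rather than 17.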

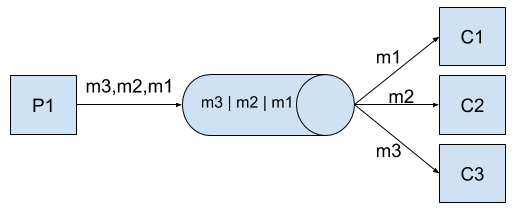

Publisher P1 sends messages to a queue. Consumers C1-C3 receive separate messages from the queue and none of them receive the same message (unless there is a failure or a retry where the message will be requeued).

Example use case:

Users upload images to a photo sharing site and those images need to be processed into various sizes and formats.

- The image processing would have multiple machines running “worker” processes to process images as fast as possible.

- Any machine can take the message (aka: the “job”) and process it, but only one of them processes any particular image.

- The image is resized into various sizes.

- The image is converted into various formats.

- Some filters are applied such as grayscale or color pop.

- All the new images are stored in file storage, such as S3, for use by other systems and to display in the web and mobile applications.

- The service would then send an acknowledgement back to the message queue service to let it know that the message has been processed.

Message broker support

- RabbitMQ: Work Queues

- ActiveMQ: Message Groups

- Kafka: Consumer Groups

- NATS: Queue Groups

- AWS SQS/SNS: SQS

- Azure Service Bus: Queues

- Google Pub/Sub: via “Pull”

- Anypoint MQ: Queue

Many-to-one

aka: exclusive consumer, fan-in.

When you must be sure that only one consumer can get the messages on a particular channel, this is the pattern you’ll use. This isn’t a very common pattern as there are few use cases for it, but the main one would be if a particular consumer must store state between messages, or if messages must be processed in exactly the right order.

Note: It’s usually best if you can architect your system in a way where this isn’t a requirement since it severely limits your ability to scale. It’s also not supported by many brokers.

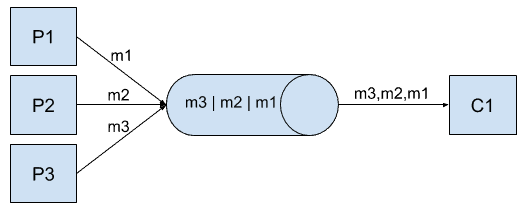

Producer P1 (can be more than one) produces messages. Only one consumer, C1, receives messages. Other consumers will be rejected.

Example use case:

An auction bidding system is a good use case for this. All bids must be processed in order by a single consumer to ensure a bid doesn’t win if someone else beat them to it or came in slightly higher.

- Initial bid is placed on an item for $1.

- Then two bids get submitted for $2.

- If the two $2 bids were to go to two different consumers, they would both process the bid and you’d have a conflict. Who won the $2 bid?

- With exclusive consumer, both bids would go to the same consumer and the consumer would reject one of the bids, so you only have one winner.
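
The bidding scenario above can be sketched as follows. This is only an illustration under simple assumptions: `ExclusiveChannel` and `Auction` are hypothetical classes, and the first bid to arrive at a given price wins.

```python
class ExclusiveChannel:
    """Toy channel that accepts exactly one consumer and rejects any others."""

    def __init__(self):
        self._consumer = None

    def subscribe(self, handler):
        if self._consumer is not None:
            raise RuntimeError("channel already has an exclusive consumer")
        self._consumer = handler

    def publish(self, message):
        if self._consumer is None:
            raise RuntimeError("no consumer attached")
        return self._consumer(message)


class Auction:
    """Single consumer that keeps state (the current high bid) between messages."""

    def __init__(self):
        self.high_bid = 0
        self.winner = None

    def on_bid(self, bid):
        if bid["amount"] > self.high_bid:
            self.high_bid = bid["amount"]
            self.winner = bid["bidder"]
            return "accepted"
        return "rejected"


auction = Auction()
bids = ExclusiveChannel()
bids.subscribe(auction.on_bid)

# A $1 opening bid, then two competing $2 bids (bidder names are made up).
results = [
    bids.publish(bid)
    for bid in [
        {"bidder": "alice", "amount": 1},
        {"bidder": "bob", "amount": 2},
        {"bidder": "carol", "amount": 2},
    ]
]

# A second consumer trying to attach is turned away.
try:
    bids.subscribe(lambda bid: "accepted")
    second_consumer_rejected = False
except RuntimeError:
    second_consumer_rejected = True

print(results, auction.winner, second_consumer_rejected)
```

Because one consumer sees both $2 bids in sequence, the second is rejected and there is a single winner.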

Message broker support

Only a few brokers support this pattern natively.

- RabbitMQ: Exclusivity

- ActiveMQ: Exclusive Consumer

Request/reply

Send a message, receive a reply. Sounds a lot like a synchronous system such as a REST API and you wouldn’t be wrong for thinking that. The general idea is that the publisher includes a destination for a consumer to publish another message with the reply/response. This would typically be another channel/topic, but could also be an HTTP URL (webhook) or something else, depending on the system.

While not as common as the other patterns, there are some cases where this is useful, such as when the response may take some time to complete or if it’s unknown which machine will process the message. For instance, a user submits a form where the processing may take a few minutes. The message includes the channel the browser will listen to for a response. When a worker machine in a processing cluster ends up completing the work, it will publish the results in a message to the reply channel, the browser will receive the results message, and will display the information to the user.

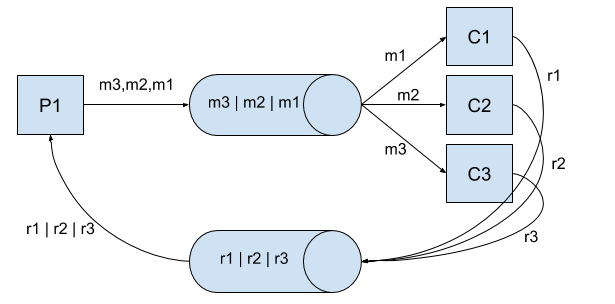

Publisher P1 sends message m1 onto channel chan1. Consumer C1 receives the message, processes it, then sends a reply message rm1 to chan2. P1 receives rm1.
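
A minimal sketch of this flow, using two in-process queues in place of chan1 and chan2. The `reply_to` and `correlation_id` field names are assumptions for the example (several brokers use similar names, but check your broker’s documentation), and upper-casing the body stands in for real processing.

```python
import queue
import threading
import uuid

requests = queue.Queue()  # chan1: where the publisher sends work
replies = queue.Queue()   # chan2: where the publisher listens for results


def consumer() -> None:
    """C1: take one request, process it, and reply on the channel the message names."""
    msg = requests.get()
    result = msg["body"].upper()  # stand-in for real processing
    msg["reply_to"].put({"correlation_id": msg["correlation_id"], "body": result})


threading.Thread(target=consumer, daemon=True).start()

# P1 tags the request so it can match the reply to it later.
correlation_id = str(uuid.uuid4())
requests.put({"correlation_id": correlation_id, "reply_to": replies, "body": "m1"})

# P1 waits on the reply channel rather than on the consumer itself.
reply = replies.get(timeout=5)
print(reply["body"])
```

The correlation ID is what lets a publisher with several outstanding requests tell which reply belongs to which request.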

Example use case:

User uploads a video to a video publishing site, like YouTube.

- User submits form with video attached.

- Video is uploaded.

- The video site sends a message to a queue to process the video.

- A worker gets the message and converts the video into an optimized format. This is a heavy process that takes several minutes.

- Once the worker has completed the job, it sends a message to the reply queue with the URL of the new, optimized video.

- The browser receives this message and displays the video to the user.

Message broker support

Not many brokers support this natively, but you can achieve this with any broker if you do it yourself following the same pattern. Just include the reply channel/topic in your messages and have your consumers push the results back to that channel/topic.

- RabbitMQ: RPC

- NATS: Request-Reply

Conclusion

In this post, we’ve covered the major asynchronous messaging patterns. While these patterns are sometimes called different things, now you know what they mean and which brokers support them. Hopefully we can all agree on some common terminology at some point. And better yet, how about a common interface to use them all, like we have with REST APIs? Imagine that.

For more information on messaging patterns, read about the top five data integration patterns.