It’s pretty common to hear and read about how everything in the IT business is going “as a service”. You hear about Software as a Service (SaaS), Platform as a Service (PaaS) and even Integration Platform as a Service (iPaaS, which is where our very own CloudHub platform plays). But what about data?

APIs, they’re everywhere

If you’re an avid reader of this blog, you have probably read countless posts about how APIs are everywhere, making the integration of cloud services possible. Sometimes those APIs expose services and behavior, like when the Facebook API lets you change your status or when the Box API lets you store a file. But what happens when I plainly and simply want to expose data? What if I don’t need to expose explicit behavior, as Facebook does when sending a friend request? What if, for me, letting consumers query and optionally modify my data is enough?

For example, consider President Obama’s Open Data Policy. In case you’re not aware of it, President Obama ordered all public government information to be openly available in a machine-readable format. That’s A LOT of data feeds to publish. Let’s make a quick list of the things government IT officials would need to carry this out:

- APIs: In order to consume these feeds, there has to be a way to connect to them. Just publishing government databases on the Internet wouldn’t work for many reasons (from security to scalability). Some kind of communication, scalability and governance layer is also necessary.

- Standardization: With so many feeds to publish, a common standard for consuming them is required. You don’t want to build and maintain a different infrastructure for each feed.

- Compatibility: It should be easy for existing systems to interact with these feeds.

All of the above is what OData is about. Initially created by Microsoft but then opened to the public, OData is a REST-based protocol that defines a standard way to expose and consume data feeds. Among its features we can mention:

- REST based

- Compatible with ATOM and JSON

- Metadata support to discover data catalogs

- Query language including aggregation functions

- Full CRUD capabilities

- Batch processing
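
To make the query language and format bullets a little more concrete, here is a minimal sketch of how an OData query boils down to a URL carrying system query options such as `$filter`, `$select`, `$top` and `$format`. It is written in Python only for brevity. The service root `https://example.org/odata`, the `Name` and `Address` properties and the `build_odata_url` helper are assumptions made up for illustration; they do not come from any real feed or library.

```python
from urllib.parse import quote

# Characters that OData expressions use literally and that we keep unescaped.
SAFE_CHARS = ",'()"


def build_odata_url(service_root, entity_set, filter_expr=None,
                    select=None, top=None, fmt=None):
    """Build a query URL for an entity set (hypothetical helper)."""
    options = []
    if filter_expr:
        options.append(("$filter", filter_expr))
    if select:
        # $select takes a comma-separated list of property names.
        options.append(("$select", ",".join(select)))
    if top is not None:
        options.append(("$top", str(top)))
    if fmt:
        options.append(("$format", fmt))

    url = service_root.rstrip("/") + "/" + entity_set
    if options:
        # Percent-encode each value (spaces become %20); option names stay as-is.
        pairs = [name + "=" + quote(value, safe=SAFE_CHARS)
                 for name, value in options]
        url += "?" + "&".join(pairs)
    return url


if __name__ == "__main__":
    print(build_odata_url(
        "https://example.org/odata",      # hypothetical service root
        "CityBuildings",
        filter_expr="Name eq 'City Hall'",  # hypothetical property
        select=["Name", "Address"],
        top=5,
        fmt="json",
    ))
```

Run as a script, this prints `https://example.org/odata/CityBuildings?$filter=Name%20eq%20'City%20Hall'&$select=Name,Address&$top=5&$format=json`. Any HTTP client can then issue a GET against a URL like that, which is a large part of what makes the protocol easy to adopt from existing systems.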

The Open Data Policy is just the tip of the iceberg. Many governments around the world are taking on similar initiatives. In case you feel that government data is a little out of the ordinary compared to your average day at work, let’s take a look at other services that use OData:

- Microsoft Dynamics CRM uses OData to expose its data catalog. You can query and modify its data and even execute some functionality using navigations.

- Microsoft Azure uses OData to expose table information

- Splunk: This Big Data company lets you integrate through an OData API

- Netflix & eBay: Although recently deactivated, these two were using OData to allow remote queries to their databases.

Where does Mule fit in?

Well, as usual, we have a connector for it. Since OData is a standard protocol, we were able to develop an OData connector that will let you connect to any service that uses it. As of today, the connector supports:

- V1 and V2 protocol specifications

- The full set of CRUD operations, including search functions

- ATOM and JSON feeds

- Batch operations

- Marshalling / Unmarshalling to your own POJO model

A quick demo

Although the goal of this post is not to dive deep into the connector, let’s take a quick look at the connector’s demo app just to illustrate how it works. This app consumes the OData feed from the city of Medicine Hat in Alberta, Canada. It’s basically an OData API listing public information such as a list of the city’s buildings. So, let’s see how to consume that!

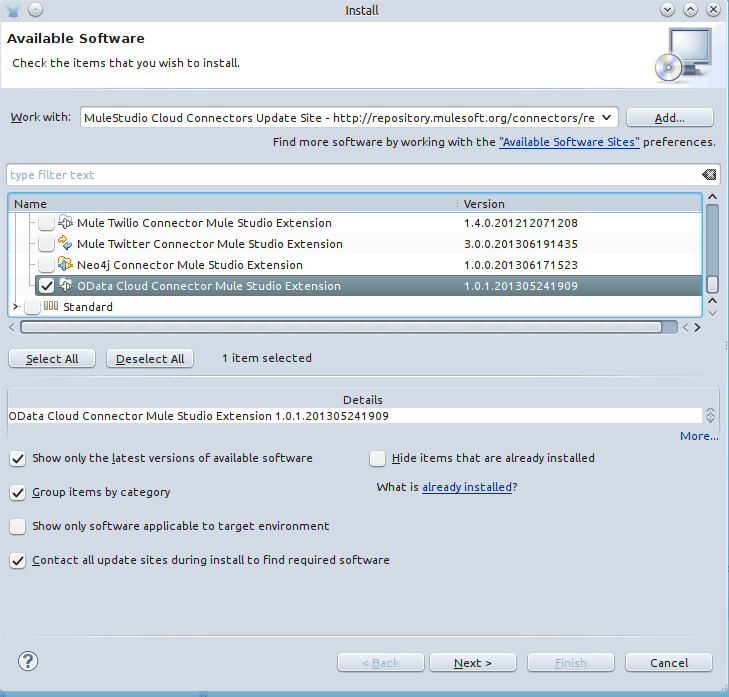

First, open up Mule Studio and install the connector from the Cloud Connectors update site:

Then, let’s start a flow with an HTTP inbound endpoint. Its configuration should look like this:

Then, drop the OData connector onto the canvas. First, create the connector’s config:

Notice that V1 and ATOM were selected as the protocol version and format merely because that’s what the team at Medicine Hat uses.

Once the config is created, use the Get Entities operation to retrieve all the buildings in the city:

In the screen above, you can see how the CityBuildings catalog was selected for querying and how you can add filters and projections to this query (although we won’t be showing that in this demo). Also, notice that we’re specifying a class as a return type. If not provided, then the connector will return an object model that represents the OData model. That is good but not really easy to work with. By being able to specify your own return type, you can easily make an object that carries the info you need and that is easier to integrate with other components such as DataMapper. In this case, our object looks like this:

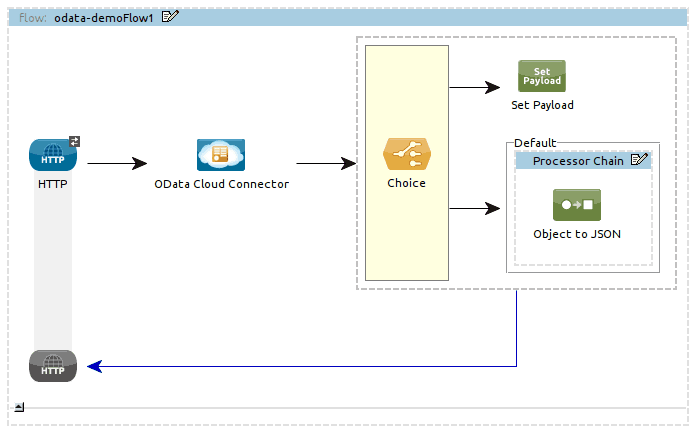
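
The idea behind a custom return type can also be sketched outside Mule. In the ATOM format, an OData entry carries its properties inside an `m:properties` element, with one `d:`-prefixed child per property, so unmarshalling amounts to copying those children onto the fields of your own class. The following Python sketch assumes a hypothetical `CityBuilding` type with `name` and `address` fields and a made-up sample entry. It is neither the class from the demo nor the connector’s actual unmarshalling code.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass

# Namespaces used by OData V1/V2 ATOM payloads.
NS = {
    "atom": "http://www.w3.org/2005/Atom",
    "d": "http://schemas.microsoft.com/ado/2007/08/dataservices",
    "m": "http://schemas.microsoft.com/ado/2007/08/dataservices/metadata",
}

# Made-up entry for illustration; property names and values are assumptions.
SAMPLE_ENTRY = """<entry xmlns="http://www.w3.org/2005/Atom"
    xmlns:d="http://schemas.microsoft.com/ado/2007/08/dataservices"
    xmlns:m="http://schemas.microsoft.com/ado/2007/08/dataservices/metadata">
  <content type="application/xml">
    <m:properties>
      <d:Name>Example Hall</d:Name>
      <d:Address>1 Example Street</d:Address>
    </m:properties>
  </content>
</entry>"""


@dataclass
class CityBuilding:
    """Hypothetical target type, playing the role of the POJO."""
    name: str
    address: str


def parse_entry(xml_text):
    """Copy the d:* properties of one ATOM entry onto a CityBuilding."""
    entry = ET.fromstring(xml_text)
    props = entry.find("atom:content/m:properties", NS)
    if props is None:
        raise ValueError("entry has no m:properties element")
    return CityBuilding(
        name=props.findtext("d:Name", default="", namespaces=NS),
        address=props.findtext("d:Address", default="", namespaces=NS),
    )


if __name__ == "__main__":
    print(parse_entry(SAMPLE_ENTRY))
```

A flat object like this carries only the fields you care about, which is why it is easier to hand over to downstream components than a generic model of the whole feed.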

Finally, we just add a Choice Router so that if no results come back we show a message saying so. If results are found, we transform them to JSON and print them in the browser. This is what the final flow looks like:

And this is what the Mule XML config looks like:

That’s it! Try and enjoy!

Additional resources

Here are a couple of helpful links:

- The OData page

- The Medicine Hat City feed

- Source code for the OData connector and the sample app shown in this post