API management is the process of designing, publishing, documenting, and analyzing APIs in a secure environment. It gives users, such as developers and partners, access to an API that is governed by a set of configurable policies.

In this post, we take a closer look at the benefits of the basic endpoint approach, which deploys a packaged API implementation together with its proxy as a single self-contained application. We then examine the steps required to pair the API within API Manager using the API autodiscovery concept.

Governing APIs

To apply governance policies such as security, throttling, and quality of service to an API, we need to create a proxy using API Manager and deploy it to a Mule runtime engine, which contains a gatekeeper that controls access to the API. To achieve this, three deployment scenarios are possible:

- Proxy endpoint: We create a proxy that sits in front of the API and controls access to the API implementation, which can run either inside or outside of Mule, as illustrated in figure 1.

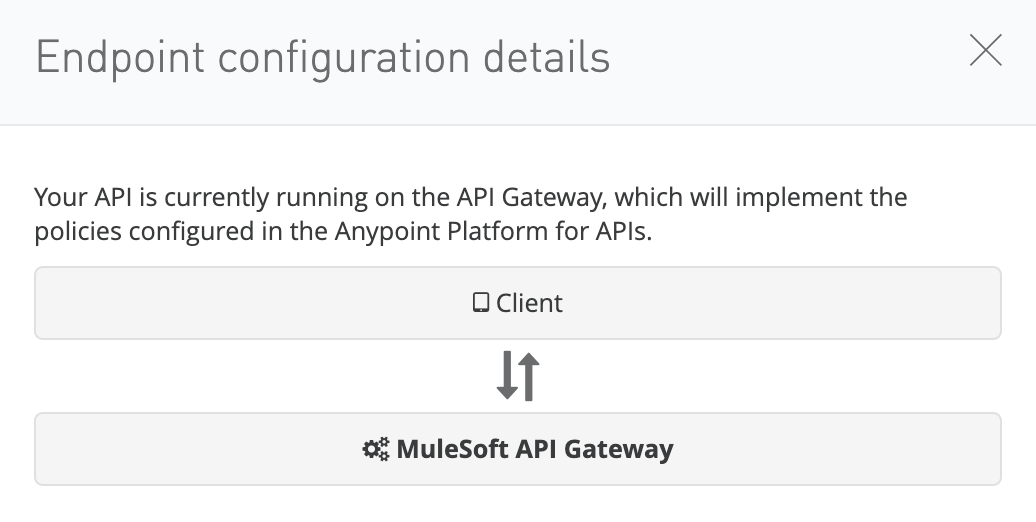

- Basic endpoint: We configure API Manager to manage the deployed API application directly, with the required policies embedded in it, as illustrated in figure 2.

- Service Mesh: Organizations may already have a non-Mule microservices architecture orchestrated with Kubernetes. In that case, they can manage policies for those APIs from the Anypoint Platform control plane via API Manager using Anypoint Service Mesh, as illustrated in figure 3.
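To make the gatekeeper idea behind all three scenarios more concrete, here is a minimal conceptual sketch in Python. It is not Mule code and does not reflect how the runtime is implemented: the function names, the policy signatures, and the simplified counter-based rate limit are all assumptions chosen for illustration. It only shows the general pattern of running a request through a list of policies before it reaches the API implementation.

```python
from typing import Callable, Dict, List, Optional, Set

# A policy inspects a request and returns an error string to reject it,
# or None to let it pass. All names in this sketch are hypothetical.
Request = Dict[str, str]
Policy = Callable[[Request], Optional[str]]


def require_client_id(known_ids: Set[str]) -> Policy:
    """Security policy: reject requests that lack a known client ID."""
    def check(request: Request) -> Optional[str]:
        if request.get("client_id") not in known_ids:
            return "401 unauthorized"
        return None
    return check


def rate_limit(max_requests: int) -> Policy:
    """Throttling policy, simplified to a total request count (no time window)."""
    count = 0

    def check(request: Request) -> Optional[str]:
        nonlocal count
        count += 1
        if count > max_requests:
            return "429 too many requests"
        return None
    return check


def gatekeeper(policies: List[Policy], backend: Callable[[Request], str]) -> Callable[[Request], str]:
    """Wrap a backend so every request must pass all policies, in order."""
    def handle(request: Request) -> str:
        for policy in policies:
            error = policy(request)
            if error is not None:
                return error  # the request never reaches the backend
        return backend(request)
    return handle


def get_accounts(request: Request) -> str:
    """Stand-in for the API implementation."""
    return "200 accounts stub"


if __name__ == "__main__":
    api = gatekeeper([require_client_id({"partner-app"}), rate_limit(2)], get_accounts)
    print(api({"client_id": "partner-app"}))
    print(api({"client_id": "unknown"}))
```

In this model, the difference between the scenarios is only where `gatekeeper` runs relative to `get_accounts`: in the same application (basic endpoint) or in a separate one in front of it (proxy endpoint).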

Proxy endpoint vs. basic endpoint

Basic endpoint

When the API implementation is deployed to Mule, the basic endpoint configuration is the recommended approach because it consumes fewer resources. The API is a self-contained packaged application that includes both the service implementation and the components needed to control access to the API. As a result, it requires only one worker (in the case of CloudHub), which hosts the dedicated instance of the application and Mule.

Proxy endpoint

The proxy endpoint requires one dedicated CloudHub worker to host the proxy application and a second dedicated worker to host the API implementation. This configuration may not be ideal, but it is justifiable for existing Mule and non-Mule API implementations that require access control via policy enforcement.

The most common use for the proxy endpoint is to proxy applications running on a customer-hosted environment or non-Mule applications. These non-Mule applications may be web services or microservices that the organization may or may not control. Either way, the organization wants to control and monitor access to those applications using the API Manager.
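The resource difference between the two configurations comes down to simple arithmetic. The sketch below assumes that every application runs on exactly one CloudHub worker and that, in the proxy case, the API implementation is also hosted on CloudHub. The function name and the dictionary are illustrative, not part of any MuleSoft tooling.

```python
# Workers per managed API, following the description in this section.
# Assumption: one worker per application, implementations hosted on CloudHub.
WORKERS_PER_API = {
    "basic": 1,  # implementation and policy enforcement share one application
    "proxy": 2,  # one worker for the proxy, one for the implementation
}


def workers_needed(api_count: int, endpoint_type: str) -> int:
    """Return the number of CloudHub workers for api_count managed APIs."""
    if endpoint_type not in WORKERS_PER_API:
        raise ValueError(f"unknown endpoint type: {endpoint_type}")
    return api_count * WORKERS_PER_API[endpoint_type]


if __name__ == "__main__":
    for kind in ("basic", "proxy"):
        print(kind, workers_needed(10, kind))
```

When the proxied implementation runs outside CloudHub, only the proxy worker counts toward CloudHub usage, which is one reason the proxy endpoint remains a reasonable choice for customer-hosted or non-Mule applications.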

How to configure a basic endpoint

In the following sections, we demonstrate how to build an API as a self-contained package that covers both the embedded API management and the API implementation, using the basic endpoint configuration.

Configure an API instance and autodiscovery

API autodiscovery is the concept used to pair an API instance defined in API Manager with a deployed Mule application. Once paired, we can configure and manage policies as well as view API analytics from within API Manager.

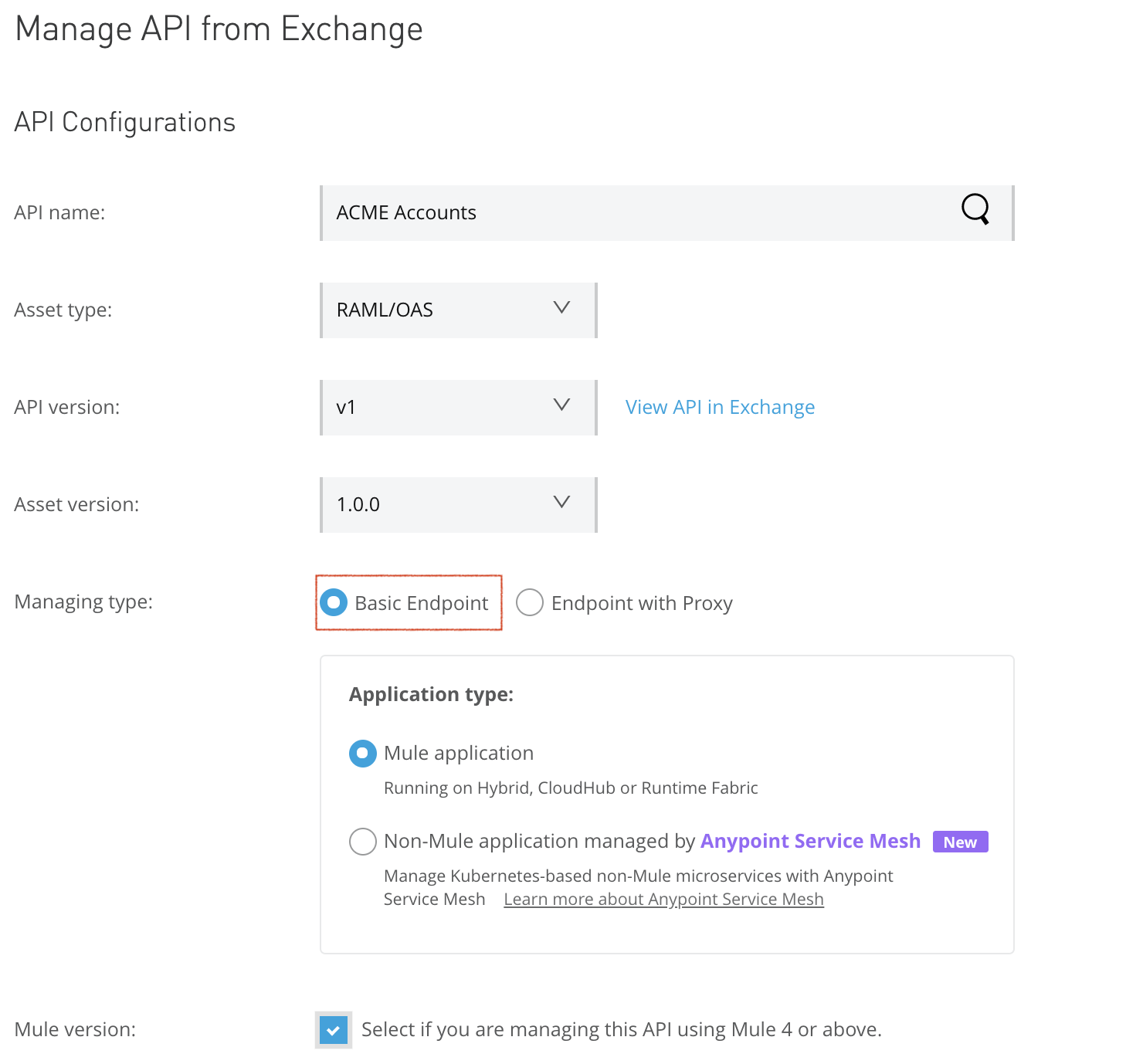

To configure the API instance, import the API specification from Exchange or from a local machine to make it available within API Manager, and select the basic endpoint configuration option.

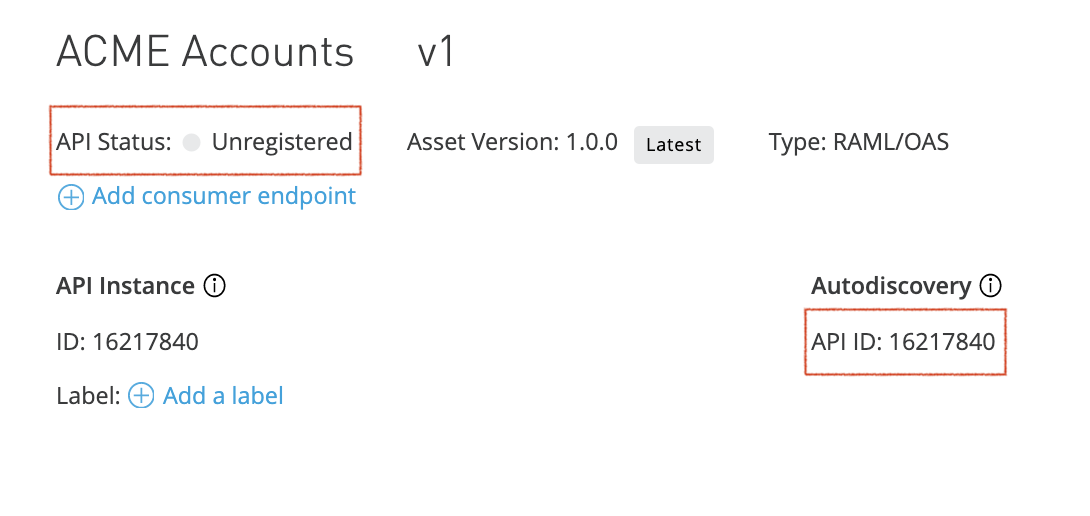

This configuration will manage a published API via API Manager. API Manager generates a unique API instance ID for that API version and sets its status to unregistered. This API instance ID is used by the autodiscovery feature in the Mule application to pair with the API instance managed by the API Manager.

Figure 5 illustrates an API instance managed by the API Manager and how the autodiscovery ID matches the API instance ID. Initially, the instance is shown as “unregistered.”
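The pairing logic described here can be modeled in a few lines. The following Python sketch is purely conceptual: the class names, the starting ID, and the `on_application_started` method are hypothetical and do not correspond to any Anypoint Platform API. It only illustrates that an instance starts as unregistered and becomes registered once an application announces a matching autodiscovery ID.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class ApiInstance:
    instance_id: int
    name: str
    status: str = "unregistered"  # initial status, as described above


class ApiManagerModel:
    """Toy model of the pairing bookkeeping (not a real client)."""

    def __init__(self) -> None:
        self._instances: Dict[int, ApiInstance] = {}
        self._next_id = 1000  # arbitrary starting value for the sketch

    def create_instance(self, name: str) -> ApiInstance:
        """Create an API instance with a unique ID and unregistered status."""
        instance = ApiInstance(self._next_id, name)
        self._instances[instance.instance_id] = instance
        self._next_id += 1
        return instance

    def on_application_started(self, autodiscovery_id: int) -> bool:
        """Pair a started application with the instance whose ID matches."""
        instance = self._instances.get(autodiscovery_id)
        if instance is None:
            return False  # no matching instance, nothing is paired
        instance.status = "registered"
        return True


if __name__ == "__main__":
    manager = ApiManagerModel()
    accounts = manager.create_instance("accounts-api")
    print(accounts.status)
    manager.on_application_started(accounts.instance_id)
    print(accounts.status)
```

The useful point of the model is the failure case: an application configured with an ID that matches no instance leaves every instance unregistered, which is what a typo in the autodiscovery ID looks like in practice.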

Deploy the Mule application

To complete the API pairing process for the unregistered API instance, we need to deploy the corresponding API implementation. The Mule application’s global element “API autodiscovery” must be configured with the API’s autodiscovery ID for the pairing to trigger during deployment. To configure and deploy the API implementation, follow these steps:

- Configure Anypoint Studio with Anypoint Platform credentials.

In the Anypoint Studio Preferences window, click on authentication, then add your Anypoint Platform account (username and password), as illustrated in figure 6.

- Retrieve Anypoint Platform client ID and client secret.

In this step, you may use the client ID and client secret of the organization or business group in which your deployment environment is configured. However, as a best practice, use the credentials of the deployment environment itself.

To do so, log in to Anypoint Platform, navigate to access management, then to environments, select the environment, and copy the client ID and client secret, as illustrated in figure 7.

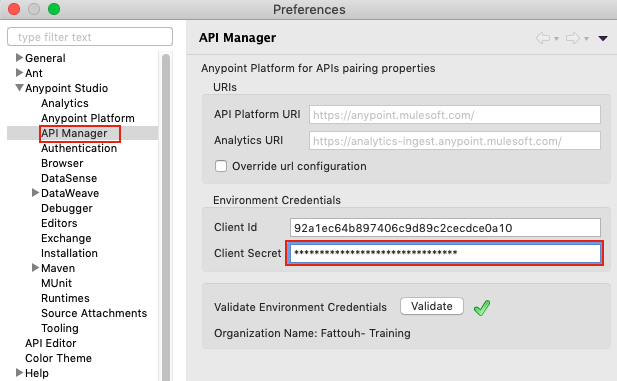

- Add the client ID and client secret in Anypoint Studio.

Open the Preferences window, click on API Manager, and add the client ID and client secret in the corresponding fields, as illustrated in figure 8.

- Create the Mule application in Anypoint Studio and configure the global element “API autodiscovery” using the autodiscovery ID.

Create a Mule application in Anypoint Studio. Add the global element API autodiscovery and set it to the API instance ID that API Manager created for the API, as shown in figure 9.

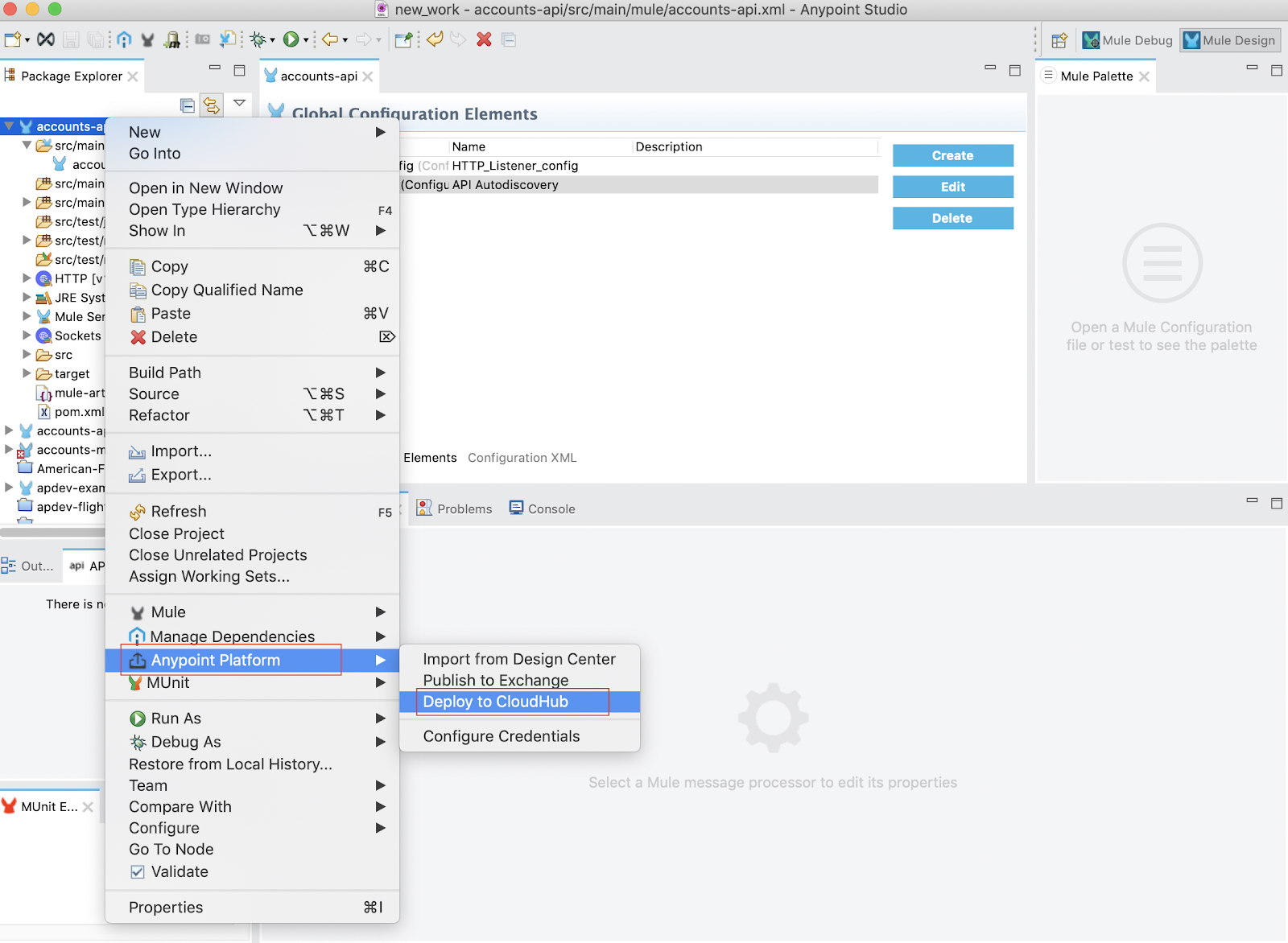

- Deploy the application to CloudHub.

Deploy the application from Anypoint Studio. In this example, the getAccounts flow contains only a stub that returns a message when the flow is triggered through an HTTP listener, because the purpose is to test the API pairing. In a real application, we would typically generate the API interface with APIKit from the RAML specification created during the design phase of the API lifecycle, and then implement the accounts logic according to that specification.

To deploy the application, right-click the project in package explorer, select Anypoint Platform, and then select deploy to CloudHub, as illustrated in figure 10.

The deployment’s success can be verified by viewing the application and system logs from within Runtime Manager. Once the application has started, you will see in API Manager that the API status has changed from unregistered to registered. This shows that the API pairing completed successfully and that API management tasks can now be performed using the policies available in API Manager.
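If the status stays unregistered, a common first check is whether the autodiscovery ID in the application matches the API instance ID in API Manager. The Python sketch below reads a Mule configuration file's XML and extracts that ID. It assumes the Mule 4 style element `api-gateway:autodiscovery` with `apiId` and `flowRef` attributes and the namespace URI shown in the code, so verify these against the XML that Anypoint Studio generated for your project. The sample configuration and the ID `15991234` are made up for illustration.

```python
import xml.etree.ElementTree as ET
from typing import Optional, Tuple

# Assumption: namespace used by the Mule 4 API autodiscovery element.
API_GATEWAY_NS = "http://www.mulesoft.org/schema/mule/api-gateway"

# Hypothetical, heavily trimmed Mule configuration used only for this sketch.
SAMPLE_CONFIG = """<mule xmlns="http://www.mulesoft.org/schema/mule/core"
      xmlns:api-gateway="http://www.mulesoft.org/schema/mule/api-gateway">
    <api-gateway:autodiscovery apiId="15991234" flowRef="getAccounts"/>
    <flow name="getAccounts"/>
</mule>
"""


def find_autodiscovery(config_xml: str) -> Optional[Tuple[Optional[str], Optional[str]]]:
    """Return (apiId, flowRef) of the top-level autodiscovery element, or None."""
    root = ET.fromstring(config_xml)
    element = root.find(f"{{{API_GATEWAY_NS}}}autodiscovery")
    if element is None:
        return None
    return element.get("apiId"), element.get("flowRef")


def matches_instance(config_xml: str, expected_instance_id: str) -> bool:
    """Check that the configured apiId equals the ID shown in API Manager.

    Property placeholders such as ${api.id} are not resolved by this sketch;
    they are compared as literal strings.
    """
    found = find_autodiscovery(config_xml)
    return found is not None and found[0] == expected_instance_id


if __name__ == "__main__":
    print(find_autodiscovery(SAMPLE_CONFIG))
    print(matches_instance(SAMPLE_CONFIG, "15991234"))
```

A check like this only confirms the ID. The environment's client ID and client secret must also be correct for the pairing to succeed.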

Summary

In this post, we introduced API management and showed how the API autodiscovery concept is used to deploy a Mule API with the basic endpoint configuration. This configuration applies when the API implementation runs in Mule on CloudHub.

If you would like to learn more, consider taking the API management course or the Production Ready Development Practices course, or check out this video on API policy enforcement.