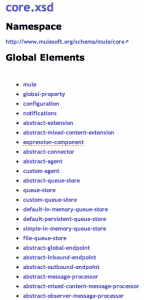

Introduction

Mule’s architecture follows the well-known Enterprise Integration Patterns, which identify the most common building blocks of integration problems. These building blocks are what make up Mule Flows, the executable units inside Mule Applications. Whatever the problem, wiring them together into an integration solution is straightforward, and Mule’s native support for the Drools Rules Engine gives the integration developer a very powerful set of tools for tackling even complex integration problems. With this post I hope to be able to demonstrate this to you!

Use Case Overview

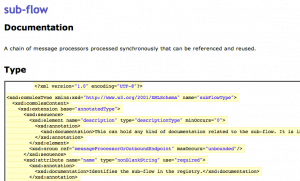

Have you ever worked on one of those large SOA projects and had to constantly browse through large sets of XML schemas describing the domain model and the messages for the services?

Those schema files could hardly be described as easy reading! Wikis, on the other hand, are marvellous little treasures of human knowledge, very readable because of their use of abstraction and the in-text hyperlinks inherent to their very nature. In this post I’d like to show you how we can convert a set of XML schema definitions from their not-so-friendly format into very readable and searchable wiki text. We’ll use VQWiki for this exercise and we’ll also cheat a little by exposing only global elements as separate pages. Their type definitions should appear in-page.

Use Case Detail

Transform the entire set of Mule’s very own schemas into a VQWiki.

The index of the schemas should be transformed into the Starter page with wiki links to the individual Schema pages.

Each schema listed in the index should have its own Schema page with links to the Element pages for those elements that it declares (see above).

Each element together with its type definition should appear in a separate Element page.

Scope restrictions

- Only versions >= 3.1 should be transformed.

- Only global elements are transformed.

- Type definitions appear as plain xml in the Element pages.

- Naming conflicts among global elements are ignored.

- Failures are ignored. Any schemas not read will appear as broken links in the wiki.

Solution Overview

A set of Flows with the following ingredients:

- Endpoints: Polling Http Inbound Endpoint, File Outbound Endpoint, VM Inbound Endpoint

- Transformation: XSLT, Java, Groovy

- Routing: All, Choice, First-Successful, Splitter, Aggregator

- Business Logic: Drools

Complexity

This problem, perhaps like many, appears rather innocent at first glance. However, the difficulties soon rear their heads. First up, we can’t assume that every schema has a version 3.3. Then we have the problem of knowing when to write a wiki page. If we’ve split a message up into separate messages, how can we know when all of those messages have passed through the subsequent processors? Correlation is the saviour here: we can group messages together based upon some arbitrary group id (we could use the target namespace of the schema in this case) and then aggregate all messages that belong to the same group for writing out to file. But the most difficult problem of all is relating type definitions to elements. We could of course continue down the correlation path, but I can see our flows branching out into ever more complexity. I like solutions to be as simple as possible, and there is a very attractive and powerful alternative: production rules! By passing our messages into a rules engine we can use some simple logic to group the elements and complexTypes together and then post new messages back to the bus based on the matches we find among all the messages. Enter Drools…
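To make the correlation idea concrete, here is a minimal plain-Java sketch of grouping split messages by a group id and releasing them once the whole group has arrived. It is not Mule’s aggregator: the class name `CorrelationAggregator`, the `add` method and the idea of passing the expected group size with every part are assumptions made purely for illustration.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Optional;

/** Collects message parts by correlation id and releases a group once it is complete. */
final class CorrelationAggregator {
    // Parts received so far, keyed by correlation id (e.g. a schema's target namespace).
    private final Map<String, List<String>> pending = new HashMap<>();

    /**
     * Adds one part. Returns the complete group when the expected number of
     * parts has arrived, otherwise an empty Optional.
     */
    Optional<List<String>> add(String correlationId, int groupSize, String payload) {
        List<String> parts = pending.computeIfAbsent(correlationId, k -> new ArrayList<>());
        parts.add(payload);
        if (parts.size() < groupSize) {
            return Optional.empty(); // still waiting for the rest of the group
        }
        pending.remove(correlationId); // group is complete, stop tracking it
        return Optional.of(parts);
    }
}
```

Each split message carries the id of its group and the group size, so the aggregating side can tell when it is safe to write the page. Relating elements to types this way would need a second, cross-schema grouping, which is where the flows start to get complicated.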

Drools

For those of you not familiar with knowledge bases (used to be called AI) and rules engines, you can check out the Wikipedia article, but in a nutshell, rules engines consist of a working memory of ‘facts’ (these can be objects or ‘messages’ in our lingo) and rules with pattern matching criteria. When the engine discovers facts that match the criteria stated in any of the rules, each of those rules is ‘fired’. A rule’s action can then retract the matched facts from the working memory so that they are not matched again.

Mule has out-of-the-box support for declaring business logic as a set of Drools rules. Rules are very easy to use! They are as simple as saying:

- WHEN I see a car, THEN do this…

- WHEN I see a blue car, THEN do this…

- WHEN I see a blue car AND I see a man AND the man is driving that same car, THEN do this…

Easy! In our exercise we were able to use one simple rule to gather together all of the elements from across all of the schemas and relate them to their type definitions and then send a new message back to the bus based on that discovery.

- WHEN I see an Element with a type value x AND I see a ComplexType with a name value x THEN post a new message which wraps them both back to the Bus.

There is one anomaly that we have to cater for here: not all of the global elements have named types. A handful come with anonymous types. To handle this scenario we simply need a second rule that finds those global elements that have no ‘type’ attribute.
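As a rough illustration of what the two rules decide, here is a plain-Java sketch of the same matching logic. It is not Drools DRL and not the project’s actual rules: the classes `Element`, `ComplexType` and `ElementPage` and the `match` method are hypothetical names, and the sketch compares type names as plain strings, ignoring namespace prefixes.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Plain-Java approximation of the two matching rules; all names are hypothetical. */
final class ElementTypeMatcher {

    /** A global element; type is null when the element declares an anonymous type. */
    static final class Element {
        final String name;
        final String type;
        Element(String name, String type) { this.name = name; this.type = type; }
    }

    /** A named complexType definition. */
    static final class ComplexType {
        final String name;
        ComplexType(String name) { this.name = name; }
    }

    /** The wrapper that would be posted back to the bus. */
    static final class ElementPage {
        final Element element;
        final ComplexType complexType; // null for anonymous types
        ElementPage(Element element, ComplexType complexType) {
            this.element = element;
            this.complexType = complexType;
        }
    }

    static List<ElementPage> match(List<Element> elements, List<ComplexType> types) {
        Map<String, ComplexType> typesByName = new HashMap<>();
        for (ComplexType t : types) {
            typesByName.put(t.name, t);
        }
        List<ElementPage> pages = new ArrayList<>();
        for (Element e : elements) {
            if (e.type == null) {
                // Second rule: no 'type' attribute, so the definition is inline.
                pages.add(new ElementPage(e, null));
            } else if (typesByName.containsKey(e.type)) {
                // First rule: the element's type equals a complexType's name.
                pages.add(new ElementPage(e, typesByName.get(e.type)));
            }
            // An element whose named type never shows up produces no page.
        }
        return pages;
    }
}
```

The difference with a rules engine is that this sketch needs every element and type up front, whereas the engine matches facts as they arrive from any schema, in any order.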

These two rules saved me a hell of a lot of correlation logic in my flows. Keep it simple, stupid, as they say!

Flow

So here’s the flow:

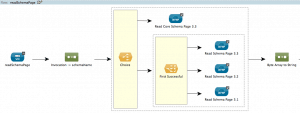

main

The schema index is read on our Http Inbound Endpoint. A custom Java Transformer turns its HTML into a simple XML document containing only the schemas found inside its anchors. We split that XML by schema name using the Message Splitter and proceed both to createIndexWiki and readSchemaPage.
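The core of such a transformer might look something like the sketch below. It assumes the index is a simple directory-listing page whose anchors point at one folder per schema; the class name `SchemaIndexParser`, the regular expression and the `<schemas>`/`<schema>` element names are all illustrative assumptions rather than the project’s actual code.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class SchemaIndexParser {
    // Matches <a ... href="value">; adequate for a simple listing page, not for arbitrary HTML.
    private static final Pattern ANCHOR_HREF =
            Pattern.compile("<a\\s+[^>]*href=\"([^\"]+)\"", Pattern.CASE_INSENSITIVE);

    /** Returns the schema names found in anchor hrefs, with any trailing slash removed. */
    static List<String> schemaNames(String html) {
        List<String> names = new ArrayList<>();
        Matcher m = ANCHOR_HREF.matcher(html);
        while (m.find()) {
            String href = m.group(1);
            if (href.startsWith("?") || href.startsWith("/") || href.startsWith("..")) {
                continue; // skip sort links and parent-directory links
            }
            names.add(href.endsWith("/") ? href.substring(0, href.length() - 1) : href);
        }
        return names;
    }

    /** Wraps the names in a small XML document so a splitter can emit one message per schema. */
    static String toXml(List<String> names) {
        StringBuilder xml = new StringBuilder("<schemas>");
        for (String name : names) {
            // Names are assumed to be plain folder names that need no XML escaping.
            xml.append("<schema>").append(name).append("</schema>");
        }
        return xml.append("</schemas>").toString();
    }
}
```

Producing one `<schema>` element per name keeps the next step trivial: the splitter only has to emit one message per child element.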

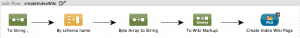

createIndexWiki

We aggregate the messages back into a single message using the Message Aggregator which we pass to the Groovy Transformer in order to prepare the wiki markup for the Starter Page which we then output to file.

readSchemaPage

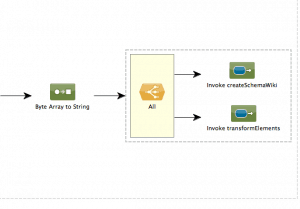

We attempt to read schemas using an Outbound Http Endpoint based on a naming convention in the URL. The one exception is the core schema, whose name doesn’t begin with ‘mule-’; we cater for it with our Choice Routing Message Processor. For all other cases we use the First-Successful Routing Message Processor to attempt to read version 3.3, failing this 3.2, and failing that 3.1. If all three fail, we simply forget about that message. We then delegate control using our All Routing Message Processor, first to createSchemaWiki and then to transformElements.
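The fallback logic can be sketched in plain Java as follows. This is only an illustration of the "first successful" idea, not how Mule implements it: the class `SchemaReader`, the path layout built in `url`, the `mule.xsd` file name for the core schema and the `fetch` function standing in for the HTTP call are all assumptions.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Optional;
import java.util.function.Function;

final class SchemaReader {
    // Newest first, matching the order tried in the flow.
    static final List<String> VERSIONS = Arrays.asList("3.3", "3.2", "3.1");

    /** Builds a schema URL; the base URL and path layout are assumptions for illustration. */
    static String url(String baseUrl, String schema, String version) {
        // The core schema is the exception: its file name has no 'mule-' prefix.
        String file = "core".equals(schema) ? "mule.xsd" : "mule-" + schema + ".xsd";
        return baseUrl + "/" + schema + "/" + version + "/" + file;
    }

    /**
     * Tries each version in turn and returns the first body that could be read.
     * 'fetch' stands in for the HTTP call and returns empty on failure.
     */
    static Optional<String> readFirstSuccessful(
            String baseUrl, String schema, Function<String, Optional<String>> fetch) {
        for (String version : VERSIONS) {
            Optional<String> body = fetch.apply(url(baseUrl, schema, version));
            if (body.isPresent()) {
                return body;
            }
        }
        return Optional.empty(); // all versions failed: the message is dropped
    }
}
```

Passing the fetch step in as a function keeps the version-ordering logic testable without a network connection.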

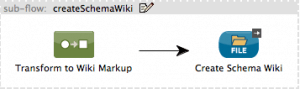

createSchemaWiki

We transform the XML from the flow above into the wiki markup for the Schema Page using an XSLT Transformer.

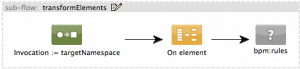

transformElements

We split the schema document into separate messages that contain either global elements or complexTypes. We then feed all of these as facts to the Drools knowledge base.
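The split itself amounts to picking out the direct children of the schema root that are either elements or complexTypes. Here is a minimal sketch using the JDK’s DOM parser; the class name `SchemaSplitter` is hypothetical, and the flow in the project may well use a different splitting mechanism.

```java
import java.io.StringReader;
import java.util.ArrayList;
import java.util.List;
import javax.xml.XMLConstants;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Element;
import org.w3c.dom.Node;
import org.xml.sax.InputSource;

final class SchemaSplitter {
    /** Returns the direct children of the schema root that are global elements or complexTypes. */
    static List<Element> split(String xsd) {
        Element root;
        try {
            DocumentBuilderFactory factory = DocumentBuilderFactory.newInstance();
            factory.setNamespaceAware(true); // needed to compare namespace URI and local name
            root = factory.newDocumentBuilder()
                    .parse(new InputSource(new StringReader(xsd)))
                    .getDocumentElement();
        } catch (Exception e) {
            throw new IllegalArgumentException("Not a parseable schema", e);
        }
        List<Element> parts = new ArrayList<>();
        for (Node n = root.getFirstChild(); n != null; n = n.getNextSibling()) {
            if (n.getNodeType() != Node.ELEMENT_NODE) {
                continue; // skip whitespace and comments
            }
            if (!XMLConstants.W3C_XML_SCHEMA_NS_URI.equals(n.getNamespaceURI())) {
                continue; // not an XML Schema construct
            }
            String local = n.getLocalName();
            if ("element".equals(local) || "complexType".equals(local)) {
                parts.add((Element) n);
            }
        }
        return parts;
    }
}
```

Only direct children of the root are considered, so elements nested inside a complexType stay where they are and travel with their parent, which matches the "global elements only" scope restriction.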

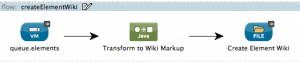

createElementWiki

This is the flow invoked by the Drools rule when it is fired. We expose it on a VM Inbound Endpoint. The messages queued there are simple model wrappers around the related elements and complexTypes that were sent to Drools above. We transform these messages using a Java Transformer into the wiki markup that represents the Element Page before outputting to file through the corresponding File Outbound Endpoint.

That’s it!

With a humble set of Mule flows and one single Drools Rule, we were able to solve a difficult integration problem! Completed project source code can be seen here: https://github.com/nialdarbey/Schema2Wiki