With the advent of next-generation, easy-to-use integration toolsets, the following scenario is becoming very familiar.

The business has a use case to move customer records from a database (DB) to Salesforce (SFDC). A system analyst in the line of business quickly creates an integration with a connector and deploys it.

A few weeks later, the customer support team comes up with another use case to display customer data on the support web portal. So, the analyst creates another integration into the DB and SFDC.

A month later an external business partner wants access to particular customer records. So, the analyst creates another integration into the DB and SFDC.

In the above scenario, we’ve created three separate integrations to fetch the same “Customer” record for different types of consumers. When are we going to stop drilling new holes into our systems to pull out the same data!?

With configuration-driven, drag-and-drop, zero-coding toolsets, it is easy for anyone to create integrations into SFDC, a DB, and an ERP whenever someone requests them. But this leads to significant technical debt and takes us into another era of spaghetti architecture. It quickly becomes a governance nightmare for IT: it’s tough to keep track of all these direct connections into systems of record and to troubleshoot them when something fails. Management wonders why we spend so much time and effort building integrations yet can’t deliver projects faster.

Let’s take a different approach, one in which IT keeps governance over data but gains scale by letting the system analysts in the various business teams quickly configure their own business processes.

In previous posts, we created integration projects that gave access to customer and account data. There, IT teams established initial connectivity and quickly exposed it as governed, reusable APIs. These became assets that can now be shared with line-of-business system analysts, who can create a business-specific process by orchestrating these reusable APIs.

The following example can be downloaded from Anypoint Exchange.

Prerequisite:

For this example, we will use the APIs that we built in our previous articles: a customer REST API and an account SOAP web service. The complete projects for these APIs can be downloaded from Exchange: customer API and account SOAP web service. You can configure and deploy them to a Mule runtime on-premises or on an iPaaS. Once the APIs start up, they can be published to a private branch in Anypoint Exchange.

Use case:

Let’s use an example where the line of business wants to create a portfolio of their customers and list all the account types they have with the bank.

Steps:

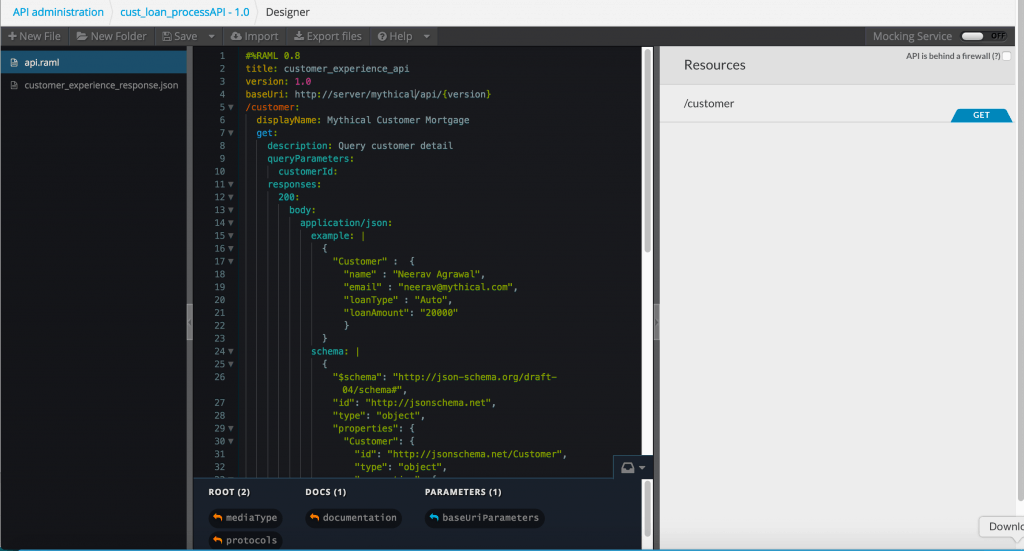

Based on the requirements, we will create a RAML spec that shows the sample request and response for this use case. Check out the sample RAML here.

Looking at the sample RAML you will notice that we need a few data elements from “Customer” and “Account” objects.

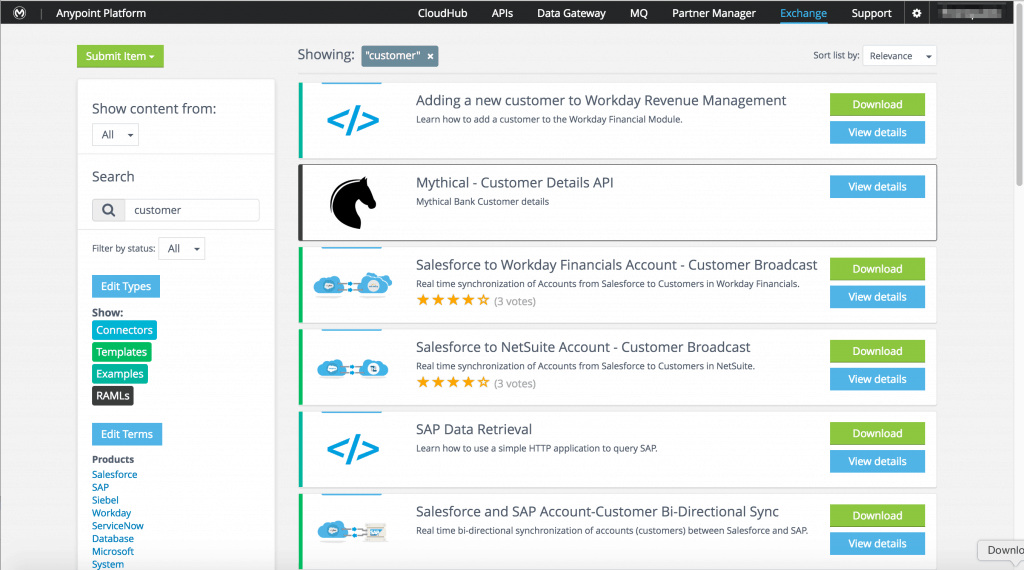

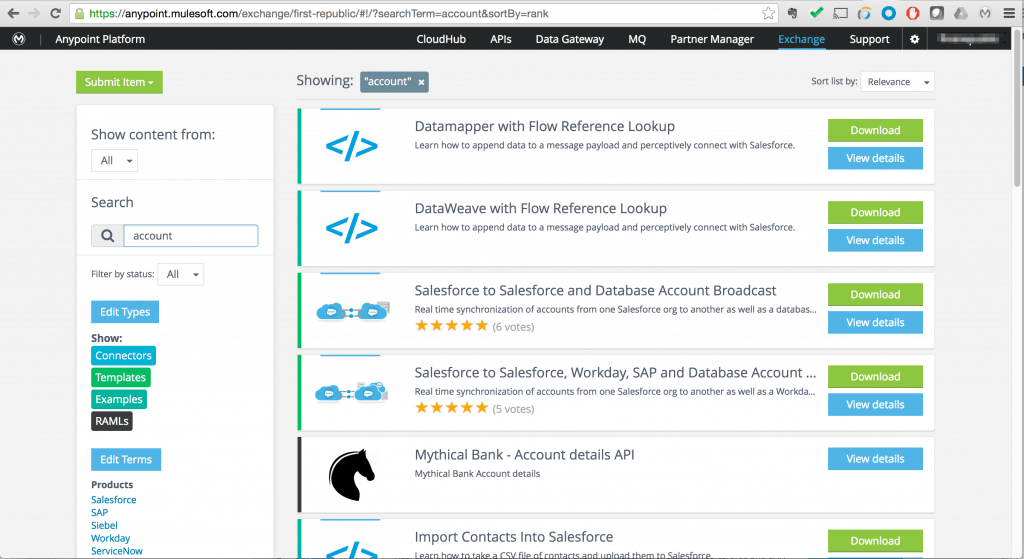

Before we go down the path of creating a brand new integration, let’s check if there is anything we can reuse from previous projects. The analyst will log into Anypoint Exchange, which has a public and a private repository for assets like connectors, templates, examples, and APIs. If you created entries in the private repo of Anypoint Exchange, then typing in “Customer” or “Account” will bring up assets from the private repo for internal APIs: the same APIs that were created as part of an earlier project.

Instead of creating a new integration and drilling another hole into our database, we will leverage the same connectivity built for a previous use case. Follow this guide for creating a private entry in Anypoint Exchange.

We need to aggregate the data from these two APIs and map it into a customer portfolio JSON response.

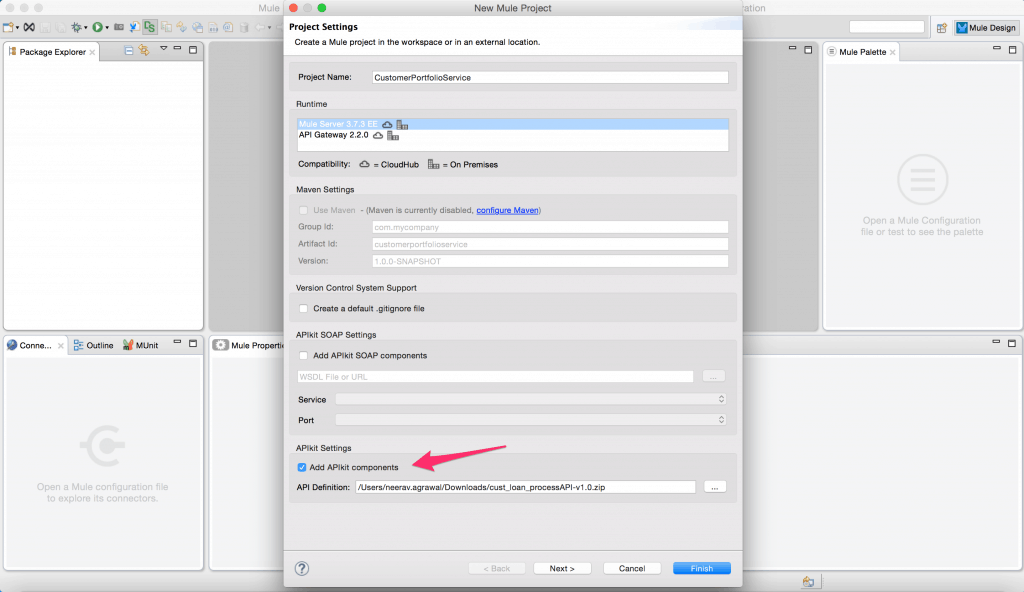

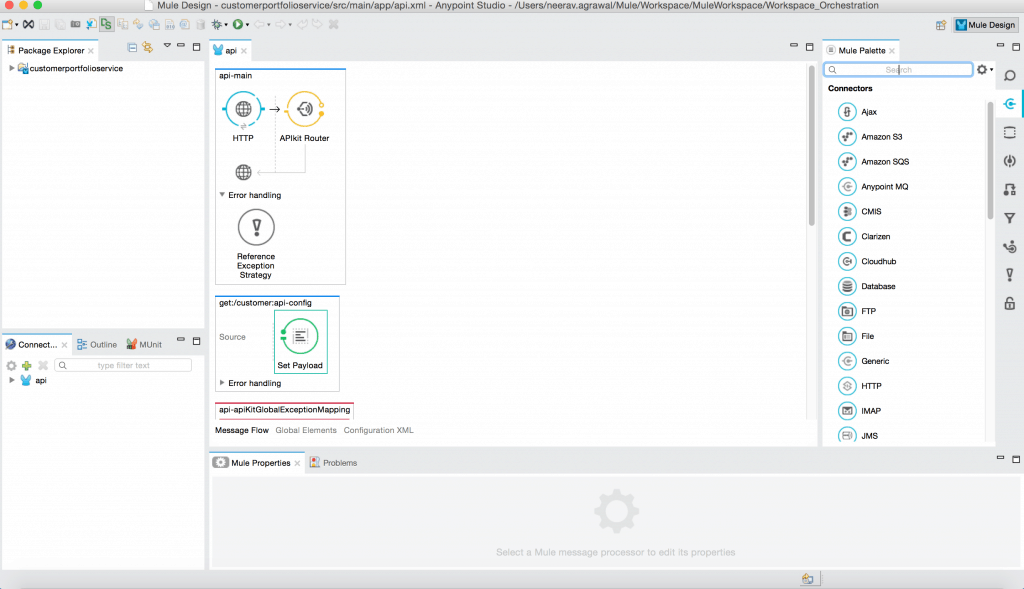

For this, open up Anypoint Studio and create a new project. Give it a name and point it to the RAML we created earlier for the customer portfolio use case.

This creates the scaffolding project.

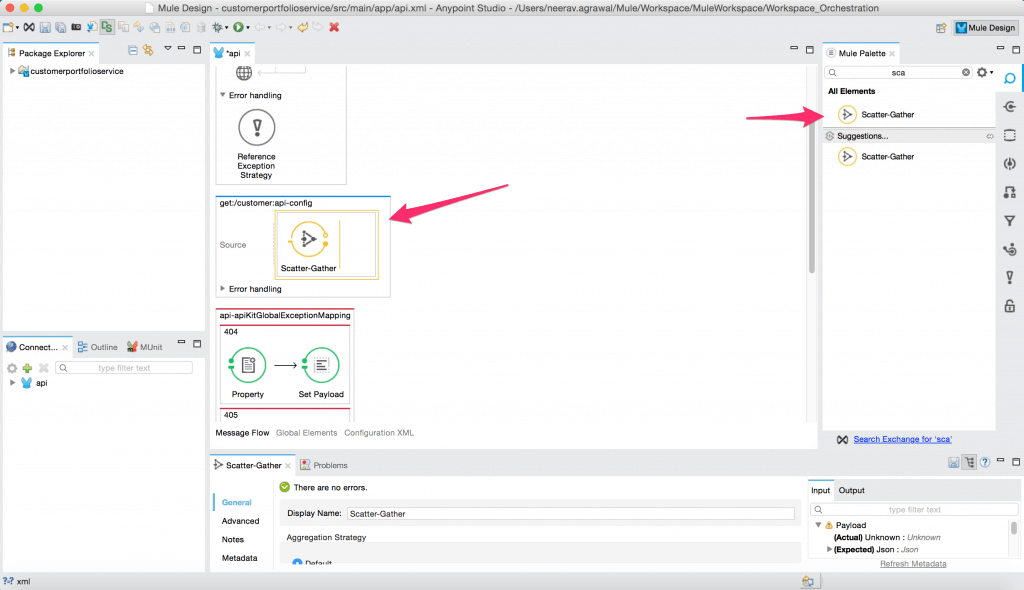

To create an aggregation we use the scatter gather pattern. Drag and drop it onto the canvas.

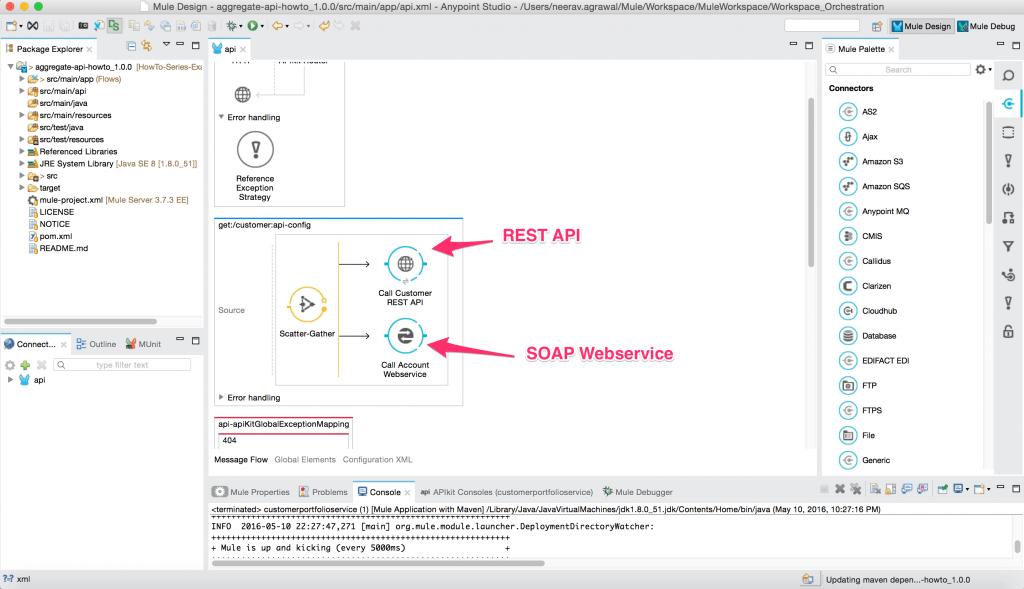

In it we assemble the API calls that we discovered from our existing assets.

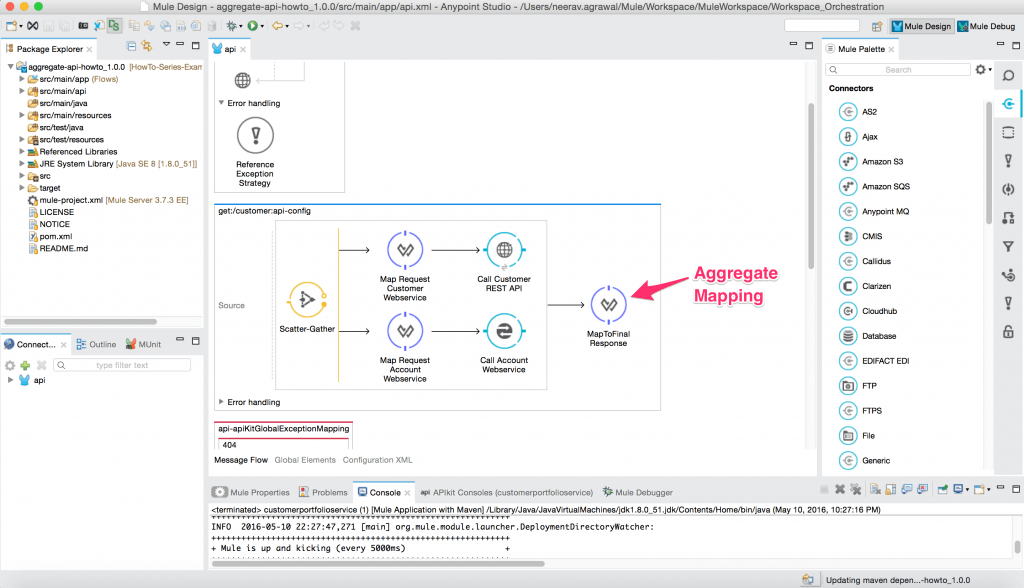
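To make the scatter-gather idea concrete outside of Studio, here is a minimal Python sketch of the pattern: send the request to every route at the same time, then collect the responses by route name. It is not the Mule configuration itself. The `scatter_gather` helper, the `fetch_customer` and `fetch_accounts` functions, and all field names are hypothetical stand-ins for the customer REST API and the account SOAP web service.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Dict


def scatter_gather(routes: Dict[str, Callable[[], Any]]) -> Dict[str, Any]:
    """Run every route concurrently and collect the results by route name."""
    with ThreadPoolExecutor(max_workers=max(1, len(routes))) as pool:
        # Scatter: hand each route to its own worker thread.
        futures = {name: pool.submit(call) for name, call in routes.items()}
        # Gather: wait for every route; result() re-raises if a route failed.
        return {name: future.result() for name, future in futures.items()}


# Hypothetical stand-ins for the two reusable APIs (assumed names and fields).
def fetch_customer(customer_id: str) -> dict:
    return {"id": customer_id, "name": "Jane Doe"}


def fetch_accounts(customer_id: str) -> list:
    return [
        {"customerId": customer_id, "type": "Checking"},
        {"customerId": customer_id, "type": "Savings"},
    ]


def gather_portfolio_inputs(customer_id: str) -> dict:
    """Call both APIs in parallel for one customer."""
    return scatter_gather({
        "customer": lambda: fetch_customer(customer_id),
        "accounts": lambda: fetch_accounts(customer_id),
    })
```

Calling `gather_portfolio_inputs("C-100")` returns a dictionary with a `customer` entry and an `accounts` entry, which is the raw material for the portfolio response. In this sketch a failure in either route surfaces as an exception; a real flow would need to decide how to handle partial results.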

Finally, we add transform components to map the API requests and to aggregate the responses from each of these APIs.
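The mapping step can be illustrated with a short Python sketch that keeps only the elements the portfolio needs and merges them into one payload. This only shows the logic, not the transform you would build in Studio. The `to_portfolio` function and every field name here (`customerId`, `name`, `accountTypes`, `type`) are assumptions for illustration; the actual shape is defined by the sample RAML.

```python
import json
from typing import Any, Dict, List


def to_portfolio(customer: Dict[str, Any],
                 accounts: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Merge one customer record and its accounts into a portfolio payload."""
    # De-duplicate and sort the account types so the output is stable.
    account_types = sorted({account["type"] for account in accounts})
    # Keep only the fields the portfolio needs (hypothetical field names).
    return {
        "customerId": customer["id"],
        "name": customer["name"],
        "accountTypes": account_types,
    }


# Sample inputs shaped like the responses of the two reusable APIs (assumed).
customer = {"id": "C-100", "name": "Jane Doe", "email": "jane@example.com"}
accounts = [
    {"customerId": "C-100", "type": "Savings", "balance": 2500.0},
    {"customerId": "C-100", "type": "Checking", "balance": 310.5},
    {"customerId": "C-100", "type": "Savings", "balance": 90.0},
]

print(json.dumps(to_portfolio(customer, accounts), indent=2))
```

Fields that the portfolio does not need, such as `email` and `balance` in this sample, are dropped, so consumers see only the data this use case calls for.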

Notice that this project not only solves the current use case, but also becomes a reusable API that can now be leveraged for future use cases.

The above example project can be downloaded from Anypoint Exchange.

Finally, we see that we have one connection that has now been leveraged across the enterprise.

The business is happy because they can concentrate on new projects instead of focusing on how to get data out of their complex backend systems.

IT is happy because the enterprise is consuming data out of a single governed connection point.

Management is happy because they see better ROI on technology and faster go-to-market on project delivery.

Simplicity and ease of use coupled with disciplined methodology can deliver the exponential growth in productivity that stands the test of time.