4 ways to externalize MuleSoft logs to the Elastic Stack

The former Netflix Architect Allen Wang posted back in 2015 on SlideShare: “Netflix is a logging company that occasionally streams video.” Five years ago,

JSON logging in Mule 4: Logs just got better

This is the second in a series about JSON logging. If you want to customize the output data structure, you can check part 1

How to use DataWeave to read XML

At some point while developing a Mule application, it’s almost inevitable that you’ll need to manipulate XML. In this article, I will teach you

Top 5 blog posts of January 2019

One month into 2019 and we’ve already covered a wide range of content – from JSON logging in Mule 4 to work automation and

JSON logging in Mule 4: Getting the most out of your logs

This is a sequel to my previous blog post about JSON logging for Mule 3. In this blog post, I’ll touch upon the re-architected version

Mule 4: Migrating DevKit projects to the new Mule SDK

A few days ago, we proudly announced the GA release of Mule 4 and Studio 7, a major evolution of the core runtime behind

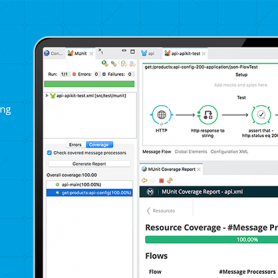

Easier assertions for XML and JSON in MUnit

This is a guest blog from a member of our developer community. Dr. Roger Butenuth is a Senior Java Consultant at codecentric, he has

JSON logging in Mule: How to get the most out of your logs

Logging is arguably one of the most neglected tasks on any given project. It's not uncommon for teams to consider logging as a second-class

How Automatic Streaming in Mule 4 Beta Works

Streaming in Mule 4 is now as easy as drinking beer! There are incredible improvements in the way that Mule 4 enables you to

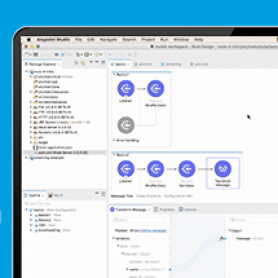

Training Talks: How to Process Flat Files in Anypoint Studio

Today you'll meet the newest member of our Training Talks series, Mark Nguyen. Mark joined the training team in November of 2016 as a