As you may have heard, Mule 3 has undergone a streamlining of its internal architecture. It’s now my job to explain what has changed, why, and what this means for you. I can’t promise it will be as exciting as a children’s movie, but I will attempt to explain things as clearly as possible so that everyone can understand the concepts, which in turn will help you use Mule 3 to its fullest.

This series of posts should be very useful both for existing Mule users wanting to understand the changes in Mule 3 and also for people who want to learn more about how Mule can be used to satisfy integration and messaging needs.

In this first blog post I’ll be taking a step back, away from Mule and its architecture and internals. We’ll remind ourselves what integration and messaging are all about, before going on to talk about the type of architecture that should ideally be used for integration or message-processing projects or frameworks.

What is Integration?

Integration, often called “Enterprise Application Integration”, is simply the interconnection of two or more systems that need to share information, such that each system has the right data at the right time. Integration is hugely important because different systems are no longer used in isolation but are instead used together. This has been the case for quite some time in the enterprise and can now even be seen in other spheres of life, with the convergence of social platforms, business applications and even governmental systems.

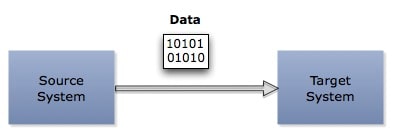

There are different ways to integrate but the commonality you will find in all of them is that there is a source system that produces the data, a data format of some kind and other systems, let’s call them target systems, that either consume or observe (listen to) the data produced by the source system. The systems that consume this data may then in turn produce other data that will be consumed or observed by a third system, or even multiple systems, and so forth.

This is integration; the concept sounds simple but there are a number of challenges:

- Protocol: Are all the systems to be integrated living in a nice homogeneous environment where all communications use a single protocol?

- Time: Is it safe to assume that the target system is always available? If that’s not the case, is it only processing data at certain times of day?

- Data Format: Do all systems, whether internal or external, new or old, on Java, .NET or other platforms, use the same data format?

- Non-functional Requirements: We also need to take performance, scalability, availability, reliability and security into account as well as maintainability and extensibility.

These challenges, along with the Fallacies of Distributed Computing, which must always be taken into account, are what make Enterprise Application Integration so much fun 🙂

Enter Messaging

Messaging deals with integration and its complexities using the following concepts:

- A Message is an abstraction for the data that travels between systems, abstracting both its data format and content.

- A Message Channel is an abstraction for the way in which two systems communicate with each other, abstracting both the protocol used and its implementation. A Message Channel may allow Messages to be sent asynchronously.

- A Message Endpoint allows systems to communicate with other systems via Message Channels, abstracting the system from the Message Channel used as well as the data format used by the channel and the target system.

- A Message Transformer is used to transform a Message from one data format to another.

- A Message Router allows the target system to be resolved by the messaging system.

- A Messaging System provides the infrastructure required to create or define both Message Channels and Message Endpoints as well as specifying which Message Transformers and Message Routers are required and where.
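To make these abstractions a little more concrete, here is a minimal Java sketch of how they might fit together. This is purely illustrative and is not Mule's API: every type and method name below (`Message`, `MessageChannel`, `MessageTransformer`, `MessageRouter`, `MessageEndpoint`, `InMemoryChannel`) is a hypothetical name chosen for this example.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Map;

// Illustrative only: hypothetical types, not Mule's API.
public class MessagingConceptsSketch {

    // A Message wraps the payload and some headers, hiding format and content.
    static final class Message {
        final Object payload;
        final Map<String, String> headers;

        Message(Object payload, Map<String, String> headers) {
            this.payload = payload;
            this.headers = headers;
        }
    }

    // A Message Channel hides the protocol used to move a Message.
    interface MessageChannel {
        void send(Message message);
    }

    // A Message Transformer converts a Message from one data format to another.
    interface MessageTransformer {
        Message transform(Message message);
    }

    // A Message Router resolves the target channel for a Message.
    interface MessageRouter {
        MessageChannel route(Message message);
    }

    // In-memory channel standing in for a real transport.
    static final class InMemoryChannel implements MessageChannel {
        final List<Message> received = new ArrayList<>();

        @Override
        public void send(Message message) {
            received.add(message);
        }
    }

    // A Message Endpoint: the source system only calls publish() and knows
    // nothing about the transformer, router or channels behind it.
    static final class MessageEndpoint {
        private final MessageTransformer transformer;
        private final MessageRouter router;

        MessageEndpoint(MessageTransformer transformer, MessageRouter router) {
            this.transformer = transformer;
            this.router = router;
        }

        void publish(Message message) {
            Message transformed = transformer.transform(message);
            router.route(transformed).send(transformed);
        }
    }

    public static void main(String[] args) {
        InMemoryChannel orders = new InMemoryChannel();
        InMemoryChannel invoices = new InMemoryChannel();

        // Toy "data format" conversion: upper-case the payload.
        MessageTransformer upperCase =
                m -> new Message(m.payload.toString().toUpperCase(), m.headers);
        // Route on a header so the source never names its target.
        MessageRouter byType =
                m -> "order".equals(m.headers.get("type")) ? orders : invoices;

        MessageEndpoint endpoint = new MessageEndpoint(upperCase, byType);
        endpoint.publish(new Message("widget x 3", Collections.singletonMap("type", "order")));
        endpoint.publish(new Message("invoice 17", Collections.singletonMap("type", "invoice")));

        System.out.println(orders.received.get(0).payload);   // WIDGET X 3
        System.out.println(invoices.received.get(0).payload); // INVOICE 17
    }
}
```

The point of the sketch is that the code calling `publish()` never mentions a channel, a format or a target system; those decisions live in the messaging infrastructure.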

For a more thorough discussion of these concepts I recommend you read the Enterprise Integration Patterns book.

These concepts and abstractions help simplify some of the complexities of integration. Message Endpoints shield applications from the details of the “protocol” used. Message Transformers (called Message Translators in the Enterprise Integration Patterns book) allow the “data format” to be converted by the messaging system without the source or destination systems knowing about it. Message Routers can be used to choose the correct target system, either at runtime or at configuration time, without the source and destination systems needing to be hard-wired to each other. Moreover, because messages can be sent asynchronously, we can decouple source and target systems in terms of “time”.
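The decoupling in “time” can be illustrated with a small sketch of an asynchronous channel. It assumes a hypothetical `QueueChannel` class backed by a standard `java.util.concurrent.LinkedBlockingQueue`; a real messaging system would typically use a durable queue instead, but the idea is the same: the source can send while the target is unavailable.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;

// Illustrative only: QueueChannel is a hypothetical name, not Mule's API.
public class AsyncChannelSketch {

    // Queue-backed channel: send() returns immediately, whether or not
    // any target system is currently consuming.
    static final class QueueChannel {
        private final BlockingQueue<String> queue = new LinkedBlockingQueue<>();

        void send(String message) {
            queue.add(message);
        }

        // Called by the target system whenever it is ready;
        // returns everything that accumulated in the meantime, in order.
        List<String> receiveAll() {
            List<String> batch = new ArrayList<>();
            queue.drainTo(batch);
            return batch;
        }
    }

    public static void main(String[] args) {
        QueueChannel channel = new QueueChannel();

        // The source system sends while the target is still offline.
        channel.send("order-1");
        channel.send("order-2");

        // Later, the target system comes online and catches up.
        System.out.println(channel.receiveAll()); // [order-1, order-2]
    }
}
```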

Another big advantage of Messaging is the ability to build solutions that are easily testable, modifiable and extensible. Whether you actually get this benefit, though, depends on the architecture that you, or the framework you use, adopt when designing and implementing your solution.

Note: I talk about systems, but everything applies equally to the integration of individual services in the same or multiple systems.

An architecture for Messaging

Why is the architecture of integration solutions so important? The best way to answer this question is to think about the ways in which you might need to modify or extend your solution:

- Incorporate a new system that has a different data format and/or protocol.

- Move a service to a different data-center or to the cloud.

- Add validation, auditing or monitoring without affecting the existing solution.

- Provision additional instances of a system/service to assure availability or manage load.

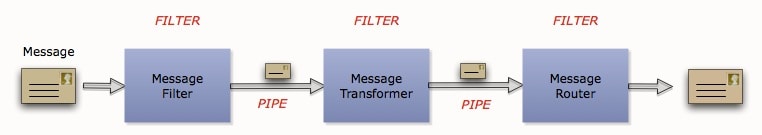

The best way to meet these requirements with an easily modifiable and extensible solution is to use the “Pipes and Filters” architectural style.

The key thing about this architectural style is that each and every Filter has a single identical interface. This allows integration solutions to be constructed very easily by simply building message flows from endpoints, translators, routers and other moving parts of the integration platform.

The nature of the Pipes and Filters style allows for easy composition of filters, transparent interception of filters by adding additional filters in between others, as well as things like transparent proxying of filter implementations.
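Composition, interception and proxying can all be sketched in a few lines of Java once every filter shares one interface. The names below (`Filter`, `pipeline`, `audit`, `countingProxy`) are hypothetical and chosen for this example only; they are not Mule's API, and messages are reduced to plain strings to keep the sketch short.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.atomic.AtomicInteger;

// Illustrative only: hypothetical names, not Mule's API.
public class PipesAndFiltersSketch {

    // The single, identical interface that every Filter implements.
    interface Filter {
        String process(String message);
    }

    // Composition: a pipeline of filters is itself a Filter.
    static Filter pipeline(Filter... filters) {
        return message -> {
            String current = message;
            for (Filter filter : filters) {
                current = filter.process(current);
            }
            return current;
        };
    }

    // Interception: records each message and passes it on unchanged.
    static Filter audit(List<String> log) {
        return message -> {
            log.add(message);
            return message;
        };
    }

    // Proxying: wraps another filter without the caller noticing.
    static Filter countingProxy(Filter target, AtomicInteger calls) {
        return message -> {
            calls.incrementAndGet();
            return target.process(message);
        };
    }

    public static void main(String[] args) {
        Filter trim = String::trim;
        Filter upper = String::toUpperCase;

        // Original flow.
        Filter original = pipeline(trim, upper);
        System.out.println(original.process("  hello ")); // HELLO

        // Extended flow: auditing inserted in between and one filter proxied,
        // without touching the trim or upper implementations.
        List<String> log = new ArrayList<>();
        AtomicInteger calls = new AtomicInteger();
        Filter extended = pipeline(trim, audit(log), countingProxy(upper, calls));
        System.out.println(extended.process("  hello ")); // HELLO
        System.out.println(log);                          // [hello]
        System.out.println(calls.get());                  // 1
    }
}
```

Because `pipeline` returns a `Filter`, whole flows can be nested inside other flows, which is what makes block-by-block construction possible.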

So what’s all this got to do with Mule 3 Architecture?

The main motivation behind almost everything we have done in Mule 3 has been Power to the User and Simplicity. Over the next few weeks and months you’ll be able to read more about these improvements and new features in Mule 3, along with example usage.

In order to do everything we wanted to do with Mule 3 we needed to ensure that Mule’s core message processing architecture was as decoupled and flexible as possible. We did this by reviewing our existing architecture and improving it to use the Pipes and Filters architectural style more consistently. These changes simplified some parts of Mule’s internals and improved testability but, most importantly, laid the foundation for the following:

- Simpler pattern-based configuration that dramatically reduces the learning curve and XML verbosity for the most common use cases.

- A much more flexible flow-based configuration approach that allows you to simply build message flows, block by block, as your design dictates. I’ll introduce this in an upcoming blog post.

- Other higher-level abstractions that will come in the future, like the new graphical Mule IDE.

In the next blog post I’ll return to Mule and talk about how the Pipes and Filters architectural style has been implemented in Mule 3.